Rumors, deceptions and outright lies have always plagued the business world. Today, however, the fallout from deepfakes and other AI-generated content is instant and measurable. A viral moment can crater sales, damage a brand and rattle investors. A spoofed voice or video can convince an employee to transfer millions of dollars to a nonexistent “customer.”

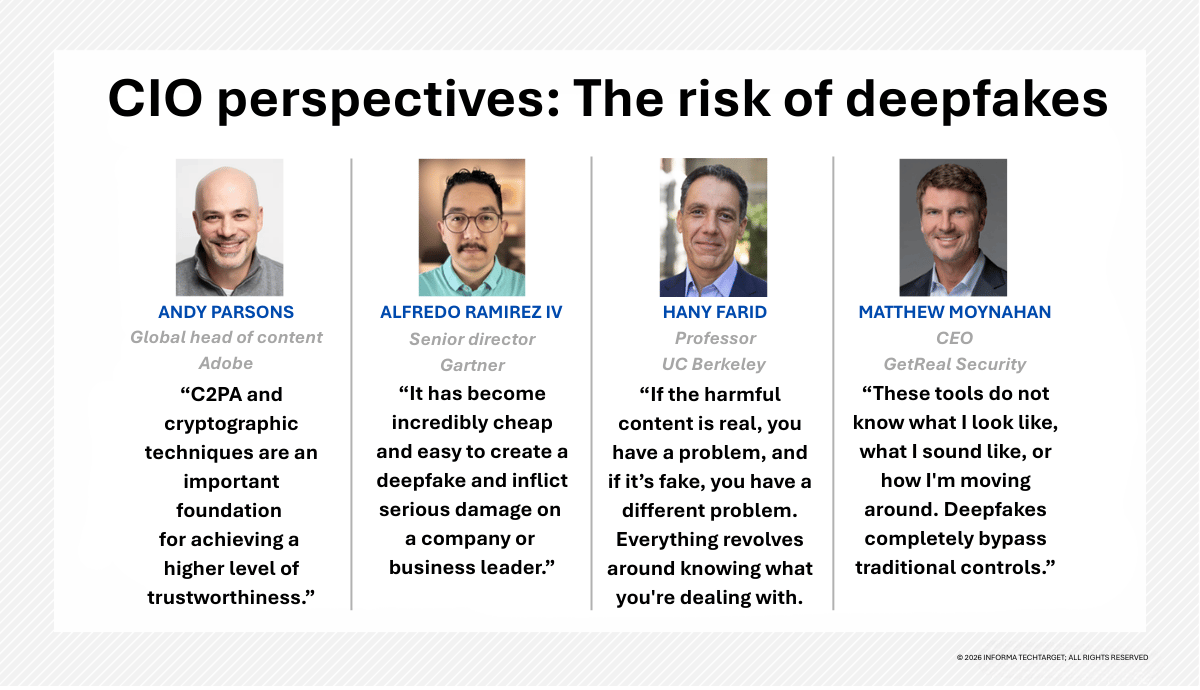

“It has become incredibly cheap and easy to create a deepfake and inflict serious damage on a company or business leader,” said Alfredo Ramirez IV, a senior director in Gartner’s emerging technologies and trends security division. “The advent of consumer-grade AI generation tools has created a very low barrier to entry.”

Attacks are more common and more sophisticated. According to Gartner, 62% of organizations have experienced a deepfake attack involving social engineering in the past 12 months. “The enterprise is emerging as a massive target,” said Hany Farid, a professor of electrical engineering and computer sciences at the University of California, Berkeley School of Information.

For CIOs and CISOs, both the challenges and the risks are growing, Farid said. It’s critical to adopt more advanced technical controls, along with other tools and processes that reduce risk. This trust-based infrastructure, an evolution toward zero trust 2.0, verifies identity, provenance and intent at the precise moment it matters.

“Knowing who and what is real and what is AI-generated is critical. Reacting quickly to attacks or potentially damaging viral content is essential,” Farid said.

How deepfakes undermine enterprise trust

Only a couple of years ago, deepfakes were notoriously easy to spot. The extra fingers and malformed objects of early deepfakes have given way to eerily accurate synthetic content. Thanks to cheap and widely available software, even trained experts with sophisticated forensics tools have trouble verifying the authenticity of media.

“Business leaders must think about protecting their companies,” said Andy Parsons, global head of content at Adobe.

Among the threats:

The problem is bigger than many CIOs and CISOs recognize. Financial losses to businesses due to deepfakes and AI fraud in the U.S. could reach $40 billion by 2027, up from $12.3 billion in 2023, according to Deloitte.

Already, several high-profile incidents have rocked companies. In 2024, a finance employee at Arup, a U.K.-based engineering firm, transferred $25 million during a video meeting in which every senior leader on screen was an AI-generated deepfake. At Qantas Airways, outside experts said that it is “highly plausible” that voice cloning was used in 2025 to convince call-center teams to share credentials for 6 million customers.

“The post-Covid world has largely shifted to remote interactions. Video calls have become the norm,” said Matthew Moynahan, CEO of GetReal Security, a firm that authenticates and verifies digital media. “There is a growing volume of streaming video and other synthetic media coming from sources and points of origin that cannot be verified.”

Why cybersecurity tools fail against deepfakes

Combating deepfakes and other generative AI attacks starts with a security reset. “The first thing to realize is that if the harmful content is real, you have a problem and if it’s fake you have a different problem,” Farid said. “Everything revolves around knowing what you’re dealing with.”

Modern cybersecurity tools fall short. While they excel at monitoring network traffic and detecting malware, they cannot verify whether the person on a video call, or the content of an image, is real or fake. “These tools do not know what I look like, what I sound like, or how I’m moving around. Deepfakes completely bypass traditional controls,” Moynahan explained.

AI detection techniques alone won’t solve the problem, Farid said. He estimated that many detection tools are only about 80% effective and offer no insight into why the system detected a deepfake in the first place. False positives and false negatives are only part of the problem. “There’s no explainability. You can’t go into a court of law or explain to the press or public why an image or video is real or fake,” he said.
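A rough worked example shows why an 80% figure leaves so much room for error. The sketch below applies Bayes' rule under loudly stated assumptions that do not come from Farid or any vendor: "80% effective" is read as 80% sensitivity and 80% specificity, and only 1 in 100 incoming items is assumed to be fake. The function name and all inputs are hypothetical.

```python
def flagged_precision(prevalence: float, sensitivity: float, specificity: float) -> float:
    """Share of flagged items that are truly fake, via Bayes' rule."""
    true_positives = prevalence * sensitivity
    false_positives = (1.0 - prevalence) * (1.0 - specificity)
    return true_positives / (true_positives + false_positives)


# Assumed inputs for illustration only: 1% of items are fake, and the
# detector is right 80% of the time on both fake and genuine items.
precision = flagged_precision(prevalence=0.01, sensitivity=0.80, specificity=0.80)
print(f"{precision:.1%} of flagged items are actually fake")  # prints 3.9%
```

Under these assumptions, roughly 96 of every 100 alerts would point at genuine content, which is one reason a bare confidence score is hard to act on.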

Even more daunting is the fact that a detection tool must operate in real time and connect to videoconferencing platforms like Microsoft Teams and Zoom. It isn’t enough to view a simple confidence score, said Farid, who is also co-founder and chief science officer at GetReal Security. “You need instant verifications across workflows, not a three-day forensic analysis.”

GetReal Security is one of a growing array of firms dedicated to combating synthetic content. Others include Reality Defender, Deep Media and Sensity AI. Another group of security firms, including Hive and Pindrop, addresses AI-generated content moderation, voice-channel deepfakes and fraud defense.

Effective tools analyze and validate signals within the media itself. That includes visual and acoustic cues such as lighting consistency, shadow angles and 3D geometry, along with behavioral biometrics such as voice patterns, facial movements and other known human characteristics. Signs of signal manipulation and environmental cues, such as a person’s known location and IP address, also need to be analyzed.

How enterprises can defend against deepfakes

Detection alone won’t make the problem go away. Organizations require a broader defense ecosystem that spans intelligence, analysis, practices and internal safeguards. Narrative intelligence, for example, monitors external platforms for disinformation campaigns. This makes it possible to catch an attack early. Red-team exercises expose vulnerabilities, including where a spoofed voice, photo or video is likely to slip through. And multi-factor verification, using known call-back numbers and security questions that only a real CFO or CEO could answer, reduces the risk of a human judgment error.

If an attack does pierce an organization’s defenses, it’s also important to respond quickly and decisively. This includes sharing crucial facts internally and ensuring that legal, communications and marketing teams have the information they need to interact with customers, partners, the media and others. A shared playbook is vital, Ramirez said.

Digital provenance has also emerged as a valuable resource. It traces a video, audio file or photo to its origin and shows whether it was altered somewhere along the way. For example, the Coalition for Content Provenance and Authenticity (C2PA) embeds cryptographically signed metadata into content. Parsons, a member of the C2PA steering committee, likened this to a “nutrition label.”
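The idea of signed metadata that travels with content can be illustrated with a minimal sketch. This is not the C2PA manifest format or any C2PA library API. It assumes a simplified model in which a manifest records a hash of the content plus a creator name, and an HMAC with a shared secret stands in for the certificate-based signatures a real provenance system would use. Every name here (`SIGNING_KEY`, `make_manifest`, `verify`) is hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; real provenance systems rely on certificates
# and public-key signatures rather than a shared key.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(content: bytes, creator: str) -> dict:
    """Bind a creator claim to the exact bytes of the content."""
    claims = {"creator": creator, "sha256": hashlib.sha256(content).hexdigest()}
    payload = json.dumps(claims, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def verify(content: bytes, manifest: dict) -> bool:
    """Check that the claims are unmodified and still match the content."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = hashlib.sha256(content).hexdigest() == manifest["claims"]["sha256"]
    return signature_ok and content_ok


original = b"example video bytes"
manifest = make_manifest(original, "Example Newsroom")
print(verify(original, manifest))            # True: content matches its label
print(verify(original + b"edit", manifest))  # False: content was altered
```

The check shows whether content still matches what was signed, not whether the content is true.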

C2PA’s content credentials are now moving through the ISO standards process. Combined with digital watermarking tools such as Google’s SynthID and tamper-evident logs that create append-only, cryptographically verifiable records, content credentials make it possible to produce verifiable and defensible media assets. “This doesn’t prove truth, but it does put authenticity within reach,” Parsons said. “C2PA and cryptographic techniques are an important foundation for achieving a higher level of trustworthiness.”
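One common way to build an append-only, cryptographically verifiable record is a hash chain, in which each entry stores the hash of the entry before it. The sketch below is an illustration under assumptions, not a description of any product named in this article. The helper names (`append`, `is_intact`) and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append(log: list, event: dict) -> None:
    """Add an event, chaining it to the current end of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "event": event, "hash": _entry_hash(prev_hash, event)})


def is_intact(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"asset": "ceo-statement.mp4", "action": "published"})
append(log, {"asset": "ceo-statement.mp4", "action": "shared"})
print(is_intact(log))  # True

log[0]["event"]["action"] = "edited"  # tamper with history
print(is_intact(log))  # False
```

A chain held in one place can still be rewritten wholesale by someone who controls it, so practical designs typically also publish or independently store the latest hash.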

Although it’s possible to strip metadata from these provenance systems — and these frameworks do nothing to stop the spread of deepfakes and other synthetic content — they establish a baseline for authenticity. In addition, as more organizations adopt digital provenance tools, malicious content becomes easier to spot.

Concluded Farid: “Oftentimes, you have only a few seconds to determine whether incoming video and other content is real or fake, and there are severe consequences if you make the wrong decision.”