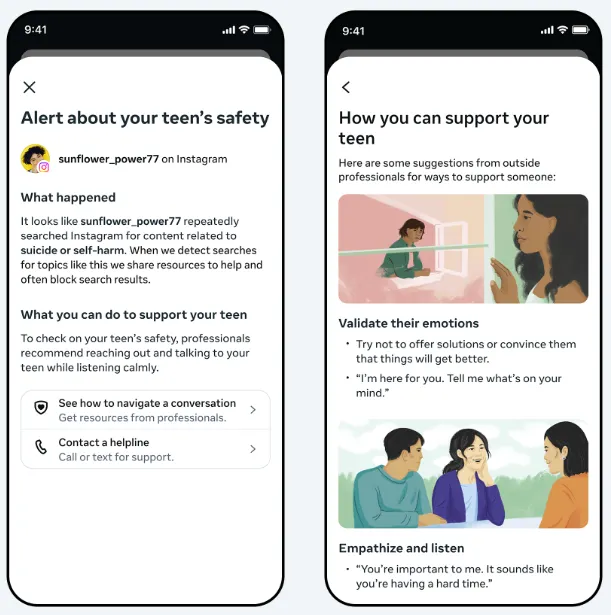

Meta announced a new safety measure for Instagram on Thursday that will alert parents if their teenage child repeatedly searches for terms related to suicide or self-harm in the app.

The alerts, which are being rolled out to parents in the U.S., Canada, the U.K. and Australia beginning next week, will send a push notification to an approved parent’s phone. The notification will provide an overview of what happened, along with links to resources that can help parents address their concerns with their children.

Parents will need to be enrolled in Instagram’s Parental Supervision program to qualify for the alerts.

As per Meta: “We understand how sensitive these issues are, and how distressing it could be for a parent to receive an alert like this. The vast majority of teens do not try to search for suicide and self-harm content on Instagram, and when they do, our policy is to block these searches, instead directing them to resources and helplines that can offer support. These alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for this content, and to give them the resources they need to support their teen.”

Meta said it’s launching these alerts on Instagram first, but it will be looking to bring them to Meta AI as well, because teenagers are increasingly asking its artificial intelligence bot similar questions.

“While our AI is already trained to respond safely to teens and provide resources on these topics as appropriate, we’re now building similar parental alerts for certain AI experiences,” Meta said. “These will notify parents if a teen attempts to engage in certain types of conversations related to suicide or self-harm with our AI.”

The update comes as Meta faces more scrutiny over its teen protection measures, with a court case underway in California concerning allegations that Meta has pursued a strategy of growth at all costs and ignored the impact of its products on children’s mental and physical health.

The trial, in which both Meta CEO Mark Zuckerberg and Instagram chief Adam Mosseri have already faced questioning, stems from allegations that Meta was aware of teen safety concerns for years before it took action.

Meta has since implemented a range of teen safety measures, but the company could face significant penalties if it turns out that it delayed acting on those concerns due to business growth considerations.

Either way, the trial is another dent in the public image of the company, which already has a poor reputation for safety and user protection.

Meta may be hoping that its more recent efforts on teen protection, including this new announcement, could help to shift that view and ensure that parents and teens feel safe and protected in its apps.