As more regions consider tougher age restrictions on social media use, Meta is looking to provide more assurance that it’s doing its part to improve its age limit enforcement.

This week, the company announced an updated, AI-powered process to detect user age, along with simplified reporting flows for accounts suspected of belonging to underage users and prompts to help parents manage their kids’ social media use.

First, Meta’s revised age-checking system now includes AI visual analysis, which will scan uploaded photos and videos for visual clues about a person’s age.

As explained by Meta: “Our AI looks at general themes and visual cues, for example height or bone structure, to estimate someone’s general age; it does not identify the specific person in the image. By combining these visual insights with our analysis of text and interactions, we can significantly increase the number of underage accounts we identify and remove.”

That’s a key point: Meta reiterated several times in its announcement that this technology is not facial recognition.

Meta has had issues with facial recognition in the past. The company shut down its Facebook facial recognition system entirely in 2021, following user backlash over the automated detection of faces in images, particularly via photo tagging.

As a result, Meta is keen to highlight that this updated process does not gather facial ID data, but instead looks at more general image cues to help predict user age.

Meta said this revised process will build on its existing age detection measures, which include scanning user profiles for birthday celebrations or mentions of school grades.

“We look for these signals across various formats, like posts, comments, bios, and captions, and we’re continuing to expand this technology across additional parts of our apps like Instagram Reels, Instagram Live, and Facebook Groups,” Meta said. “If we determine an account may be underage, it will be deactivated and the account holder will need to provide proof of age through our age verification process to prevent their account from being deleted.”

In combination, these various systems should improve Meta’s age detection process and help to ensure that the company is doing what it can to keep underage users out of its apps.

Meta is also rolling out simplified reporting for accounts suspected of belonging to underage users. The company said it will use AI to streamline the reporting process, and will offer improved measures to detect when banned users try to create new accounts.

In addition, Meta is implementing proactive teen account detection technology in more regions.

Over the last year, Meta has been using expanded measures in some regions to detect young users who’ve lied about their age. Meta will now bring this tech to Brazil, as well as all 27 EU countries.

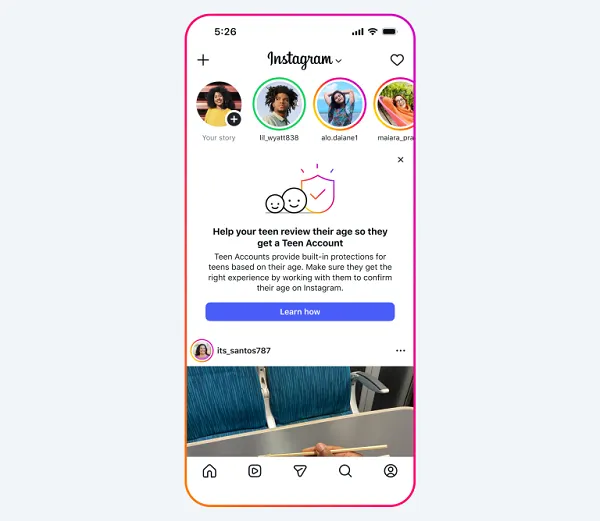

Finally, in the U.S., Meta will begin prompting parents on Facebook and Instagram with information about how to check and confirm their teens’ listed ages within each app.

These expanded measures come as Meta faces more pressure to restrict teenagers’ app usage, amid rising support for teen social media restrictions in several markets.

Last month, the European Commission announced the preliminary findings of its investigation into the company’s efforts to keep young users out of its apps. The Commission determined that Meta’s current age-checking and detection systems were inadequate and failed to meet its obligations under the Digital Services Act.

Earlier this week, Meta threatened to pull its apps from the state of New Mexico, after legislators there proposed new rules that would impose tougher penalties on Meta if the company fails to keep children off its apps.

These specific cases, along with the broader push for increased teenage social media restrictions, have put Meta, in particular, under more pressure to provide solutions. These new measures could help to reassure authorities and users that the company is working to improve its systems.

Even so, no age-checking process is perfect, and no matter how many systems and tools Meta puts in place, younger, digitally native audiences will work out ways to circumvent the rules, making broader enforcement difficult.

That’s probably why Meta is hesitant to commit to stronger, legally binding targets for keeping kids out of its apps. The company knows it can’t make guarantees.

Teens have found all kinds of workarounds, from using a VPN to drawing moustaches on their faces to trick age detection tools, a tactic cited in a recent report from Internet Matters in the U.K.

That such a simple trick can work at all highlights the limits of age detection systems. Yes, they are improving, but low-tech tactics like this can still defeat even the most advanced tools.

Indeed, in Australia, which implemented its under-16 social media ban in December, initial reports on the system’s effectiveness indicated that most kids were still accessing social media apps, and that the ban had no significant impact, despite the threat of increased penalties.

Given these limitations, it seems unfair to impose tougher penalties on platforms that fail to meet stricter requirements.

But working with the platforms to implement a range of measures, while also investing in digital literacy education, could lead to better outcomes than blanket bans and blunt penalties.