A tech market report on AI, cloud, software delivery, employment, Vietnam’s digital economy, and the buyer actions that matter after Q1 2026.

Written from a Designveloper researcher’s point of view.

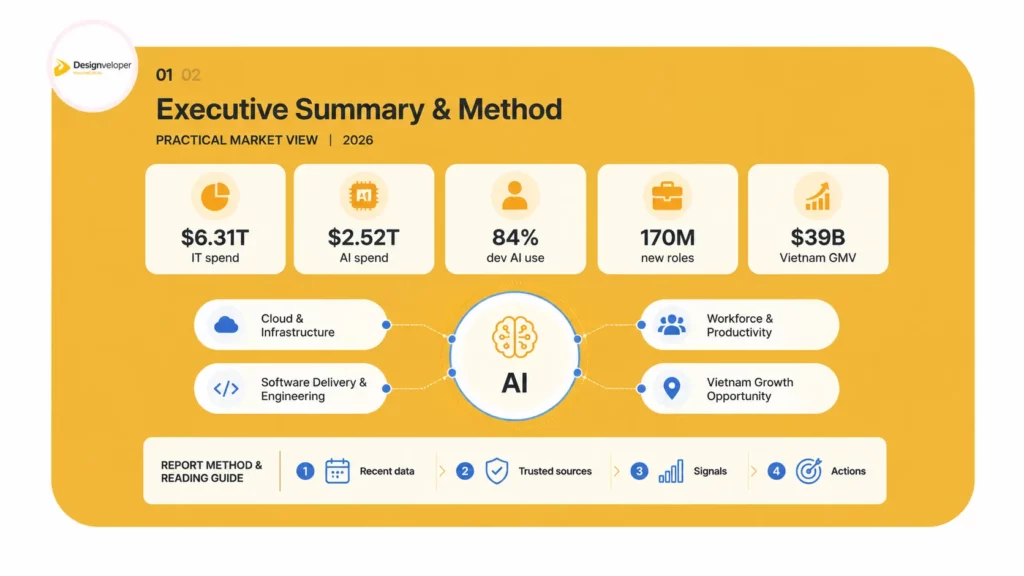

1. Executive Summary

Q1 2026 marks a clear shift in technology markets. The Q1 2026 Tech Market Report from Designveloper reads that shift in simple terms. AI is no longer a side experiment. It now changes IT budgets, cloud demand, software delivery, hiring plans, and operating models. The market has moved from interest to implementation. That makes the next phase more practical and more demanding.

The first signal comes from spending. Gartner expects worldwide IT spending to reach $6.31 trillion in 2026, up 13.5% from 2025. This is not a normal upgrade cycle. Data center systems, software, and IT services carry much of the momentum. AI infrastructure sits behind that demand. It pulls budget toward servers, memory, cloud capacity, model operations, integration, security, and managed services.

The second signal comes from AI itself. Gartner forecasts worldwide AI spending at $2.52 trillion in 2026. The same forecast shows AI infrastructure as the largest bucket. That matters because infrastructure spend creates a second-order software market. Once firms buy capacity, they need systems that can use it. They need copilots, agents, RAG systems, document workflows, analytics layers, and governance dashboards. They also need engineering teams that can connect these tools to real business processes.

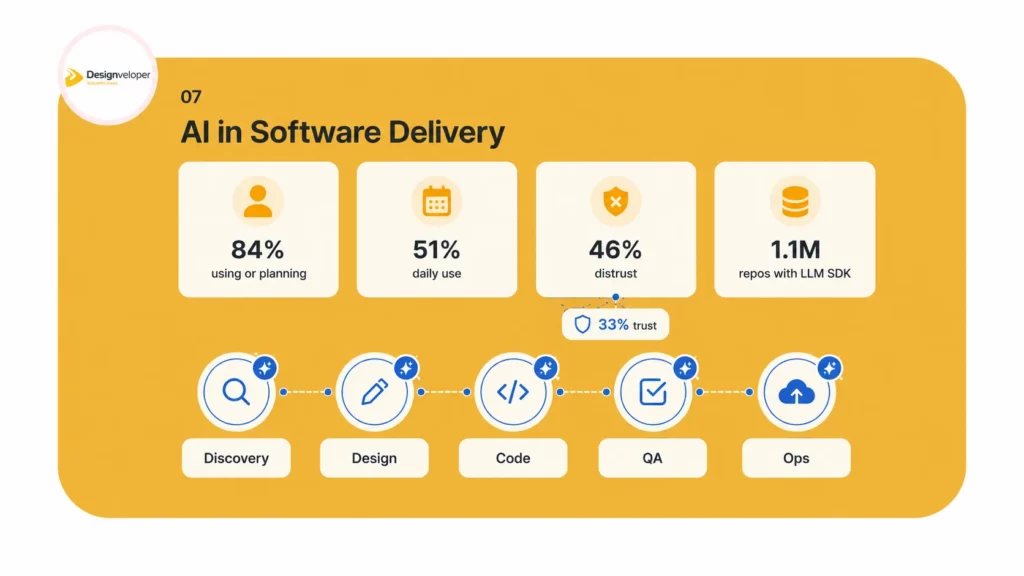

The third signal comes from developers. Stack Overflow reports that 84% of respondents are using or planning to use AI tools in the development process, while 51% of professional developers use AI tools daily. Yet trust has not kept pace. More developers distrust AI output accuracy than trust it. This creates a market contradiction. AI speeds up coding, but human review becomes more valuable, not less valuable.

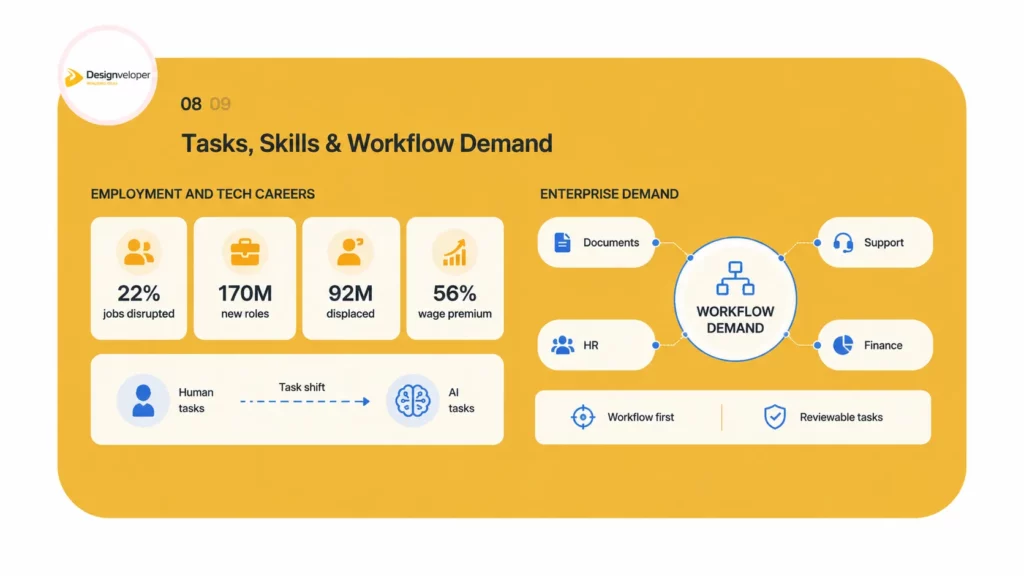

The fourth signal comes from work. The World Economic Forum expects job disruption to affect 22% of jobs by 2030. It also expects 170 million new roles and 92 million displaced roles. The net figure, a gain of 78 million roles, looks positive, but the path will feel uneven. The pressure will first hit entry-level work, routine tasks, and teams without clear reskilling systems. Companies that only cut roles may weaken their future talent pipeline. Companies that redesign workflows can gain speed and keep learning inside the organization.

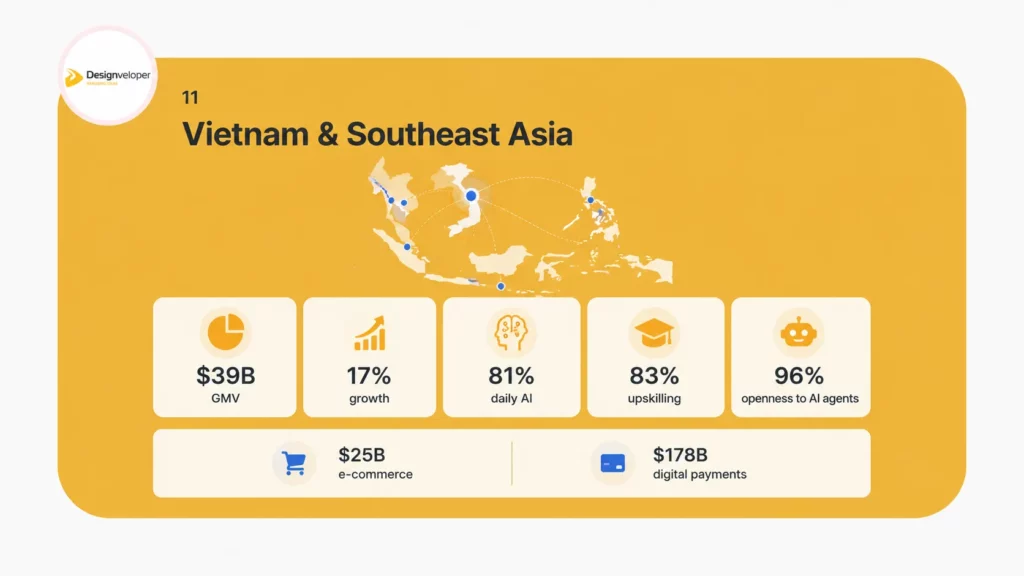

The fifth signal comes from Vietnam and Southeast Asia. Google, Temasek, and Bain expect Vietnam’s digital economy to reach $39 billion in GMV in 2025. The same Vietnam announcement says 81% of users interact with AI daily and 96% express high trust in AI agents or data sharing. This gives Vietnam a strong adoption base. It also gives software partners in Vietnam a wider role. They can help global clients build lower-friction AI workflows, web products, mobile products, and internal tools.

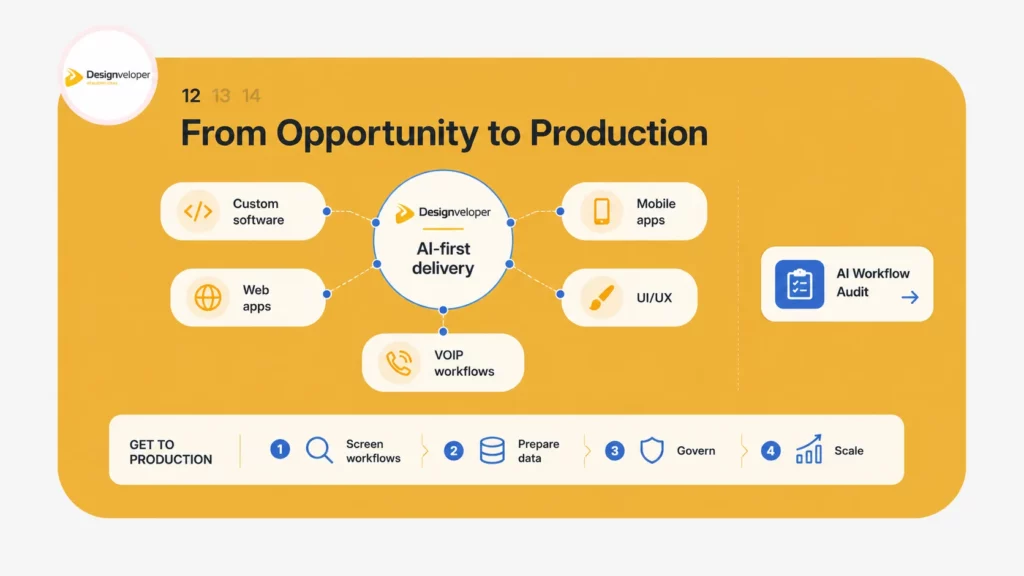

Designveloper sees the market through a delivery lens. The strongest buyers will not ask only, ‘Which model should we use?’ They will ask, ‘Which workflow should change, which data should feed it, which users should approve it, and which metric should improve?’ That is why this report frames AI as a production challenge. It connects AI engineering, product engineering, workflow design, security, and business value.

The executive conclusion is direct. The 2026 tech market rewards teams that turn AI into measurable operational systems. It does not reward generic demos. It rewards better document handling, faster software releases, stronger customer support, cleaner finance workflows, safer HR operations, and more useful internal assistants. The firms that win will use AI to remove repetitive work while strengthening human judgment. That is the practical reading of this Q1 2026 Tech Market Report.

Key reading: AI spend is rising. Cloud demand is rising. Developer adoption is rising. But trust, governance, and workflow design now decide value.

| Market signal | What changed in Q1 2026 | Designveloper reading |
|---|---|---|
| IT spending | Gartner lifted the 2026 global IT spending forecast to $6.31 trillion. | AI infrastructure and software demand are pulling budget from experiments into production systems. |
| AI spending | Gartner forecasts $2.52 trillion in worldwide AI spending in 2026. | Infrastructure is necessary, but business value comes from workflow adoption. |
| Cloud | Synergy says Q1 2026 cloud infrastructure spending reached $129 billion. | Cloud capacity has become a direct input into AI product strategy. |
| Developer work | Stack Overflow says 84% use or plan to use AI tools. | AI-assisted delivery needs review systems, tests, and architecture control. |
| Jobs and skills | WEF expects 22% job disruption by 2030. | The issue is task change, not a simple job-loss story. |
| Vietnam | Google, Temasek, and Bain expect Vietnam’s digital economy to reach $39 billion in GMV in 2025. | Vietnam can serve both local AI adoption and global delivery demand. |

2. Report Method and Reading Guide

This report uses a market triangulation method. It combines spending forecasts, enterprise surveys, developer platform data, labor market reports, and Vietnam market signals. The goal is not to predict every number. The goal is to find the direction of change that buyers, product teams, and technology leaders can use now.

Designveloper treats Q1 2026 as a turning point because several independent sources now point to the same pattern. IT budgets are moving up. AI infrastructure is still absorbing large capital. Cloud demand keeps expanding. Software teams use AI more often. Workers and employers face faster skill change. Yet the same sources also show friction. AI output still needs verification. Cybersecurity teams need stronger controls. Employees may use personal AI accounts for work. Junior talent may face pressure. These details make the market more nuanced than a simple boom story.

The report uses four filters. First, it looks for recent data. Second, it gives priority to primary or high-authority sources. Third, it separates signals from claims. Fourth, it interprets each signal through Designveloper’s delivery experience as a web, software, AI, mobile, and VoIP development partner. This makes the report practical for leaders who must choose projects, allocate budget, and reduce delivery risk.

The report also follows a deductive structure. Each section starts with the main conclusion. Then it explains the data behind that conclusion. After that, it turns the data into action. This mirrors the way decision makers read tech market reports. They need the answer first. Then they need support. Then they need a path forward.

The analysis focuses on global technology buyers, software teams, operational businesses, and Vietnam-based delivery opportunities. It does not treat AI as a single product category. AI now appears inside infrastructure, software, cloud services, workflow tools, security systems, developer environments, and HR processes. That is why the report uses a cross-market lens.

The source set includes Gartner, Stanford HAI, Synergy Research Group, Stack Overflow, GitHub, World Economic Forum, PwC, LinkedIn, McKinsey, Google, Temasek, Bain, Reuters, and public Designveloper pages. The report also uses Designveloper’s internal brand direction and project proof notes where it discusses service fit and relevant experience. Internal examples support brand context; external sources support market data.

Readers should use this report as a planning document. It helps answer five questions. Where is technology spend moving? Which AI use cases deserve priority? How should software teams change delivery practices? What does AI mean for hiring and skills? Why does Vietnam matter in the 2026 technology market?

The report avoids hype. It does not claim that AI will replace all developers or solve every workflow. It also does not dismiss AI as a passing trend. The evidence supports a middle view. AI will automate parts of many tasks. It will also increase demand for product thinking, domain knowledge, data quality, security, system design, and change management.

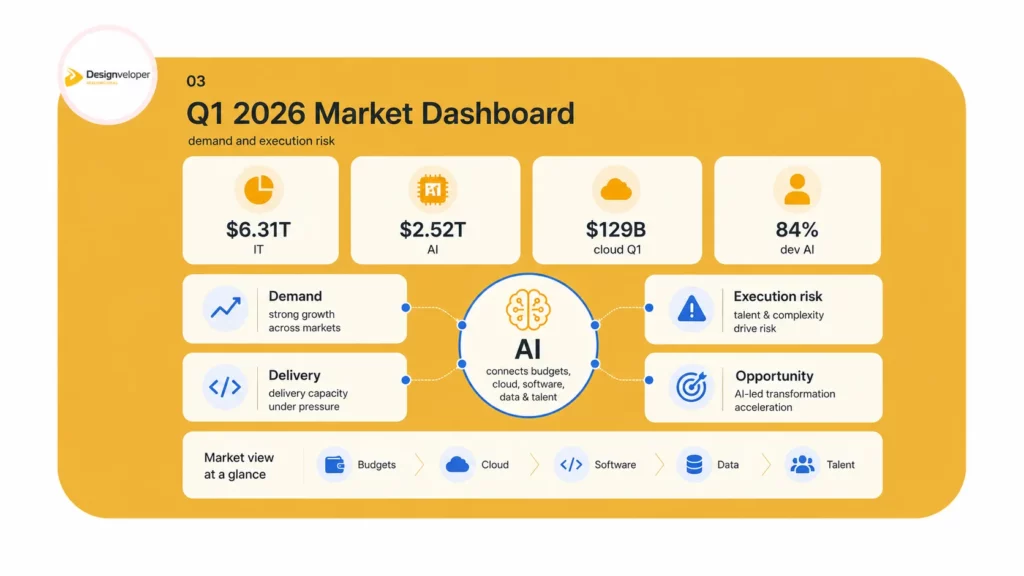

3. Q1 2026 Market Dashboard

The dashboard view is simple. Q1 2026 confirms a market with strong technology demand and rising execution risk. Demand comes from AI infrastructure, cloud services, software modernization, and workforce productivity pressure. Execution risk comes from data quality, security, trust, talent, and the gap between AI demos and production workflows.

This is why buyers should not judge the market only by vendor growth or model capability. They should judge it by whether technology changes work. Does it reduce a manual step? Does it improve a customer response? Does it shorten a release? Does it make a finance or HR process easier to approve? Does it make a developer more reliable, not just faster? These questions separate useful AI from noisy AI.

Dashboard Snapshot

| Area | Current signal | Risk if ignored | Best next move |
|---|---|---|---|
| AI budgets | AI spending expands fastest around infrastructure, services, and software. | Teams spend on tools but fail to create business impact. | Rank use cases by workflow value and data readiness. |
| Cloud | Cloud infrastructure spending keeps rising with AI workloads. | Costs rise without control or workload governance. | Build cloud cost visibility into AI roadmaps. |
| Developer work | AI tools become normal in coding, tests, docs, and support. | Poor code review increases rework and security debt. | Define AI coding rules, test coverage, and human review gates. |
| Workforce | Skills change faster than job titles. | Entry-level pipelines weaken and senior staff carry more review work. | Pair reskilling with role redesign and mentoring. |
| Cybersecurity | AI agents and personal AI use expand attack surfaces. | Sensitive data leaks or unmanaged agents create compliance risk. | Create AI inventory, access controls, and response playbooks. |
| Vietnam | Vietnam shows strong digital and AI adoption signals. | Firms miss a high-adoption market and delivery base. | Use Vietnam teams for AI-enabled software and workflow delivery. |

The most important insight is not that every number is large. It is that the numbers point toward the same operational change. AI now pushes buyers to connect strategy, product, data, cloud, software engineering, and talent. This favors companies that can manage the full path from problem definition to production deployment.

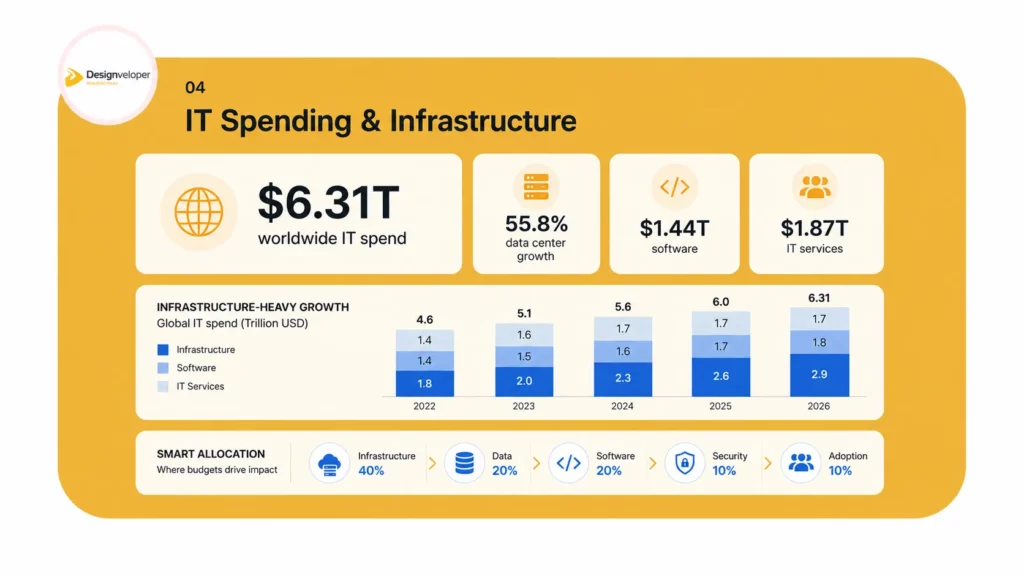

4. IT Spending: AI Turns Budgets Into Infrastructure

The first market conclusion is clear. Enterprise technology spending is expanding because AI needs a full stack. Gartner’s April 2026 forecast puts worldwide IT spending at $6.31 trillion in 2026. That number reflects more than enthusiasm for new tools. It reflects a broader buildout across data centers, software, services, devices, and communication services.

Data center systems carry the sharpest growth rate in the Gartner table, with 55.8% growth in 2026. That detail changes the reading of the market. AI does not only increase app demand. It increases demand for compute, memory, accelerators, storage, networking, power planning, and cloud architecture. It also raises the value of people who can design systems that use this infrastructure without wasting it.

Software spending also looks strong. Gartner forecasts software spending at $1.44 trillion in 2026. This matters because software is where AI leaves the data center and enters the workflow. Infrastructure creates capacity. Software creates user behavior. The most valuable software projects in 2026 will connect AI to a real decision, approval, request, code path, document, or customer touchpoint.

IT services remain the largest spending category in Gartner’s table, reaching $1.87 trillion in 2026. That reinforces a practical point. Companies do not only need tools. They need implementation. They need migration, integration, custom software, managed infrastructure, process redesign, testing, training, and support. This is where software partners become more important.
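The category figures quoted in this report can be put on one scale with a few lines of arithmetic. The sketch below uses only the rounded Gartner numbers cited here ($6.31 trillion total, 13.5% growth, $1.44 trillion for software, $1.87 trillion for IT services), so the implied 2025 base and the shares are approximations derived from those roundings, not values published by Gartner.

```python
# Rounded Gartner 2026 forecast figures as quoted in this report (USD trillions).
TOTAL_IT_2026 = 6.31
GROWTH_VS_2025 = 0.135
CATEGORIES_2026 = {"Software": 1.44, "IT services": 1.87}

# Back out the 2025 base implied by the quoted growth rate.
implied_total_2025 = TOTAL_IT_2026 / (1 + GROWTH_VS_2025)
print(f"Implied 2025 IT spending: ${implied_total_2025:.2f} trillion")

# Express each quoted category as a share of the 2026 total.
shares = {
    name: 100 * value / TOTAL_IT_2026
    for name, value in CATEGORIES_2026.items()
}
for name, pct in shares.items():
    print(f"{name}: {pct:.1f}% of 2026 IT spending")
```

On these rounded inputs, software and IT services together account for a little over half of forecast 2026 IT spending, which is consistent with the reading that implementation work carries much of the budget.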

The budget pattern also creates a new question for CIOs. Should the enterprise spend first on models, cloud, software, data, or workflow redesign? The best answer depends on the use case. A document automation project may need retrieval, redaction, review flows, and access control. A coding productivity program may need repository policies, tests, code review prompts, and secure developer environments. A customer support agent may need conversation history, escalation rules, and analytics. These projects use AI, but they succeed through systems design.

This is why Designveloper reads 2026 as a system integration market. Buyers will not capture value by buying isolated AI subscriptions. They will need to map work, clean data, define roles, build software layers, and measure outcomes. The delivery partner that understands product engineering and workflow design can reduce risk because it sees more than the model call.

The market is also multi-speed. AI infrastructure and GenAI software grow faster than many traditional categories. Devices grow, but with memory cost pressure. Communication services grow more slowly. That split means technology leaders should not spread budget evenly. They should identify the few places where AI changes a workflow enough to justify spend.

A practical budget model should divide AI spend into five buckets. The first bucket is infrastructure and cloud. The second bucket is data readiness. The third bucket is software and integration. The fourth bucket is governance and security. The fifth bucket is adoption and training. Many failed pilots overfund the first bucket and underfund the next four. Q1 2026 data suggests that buyers should correct that balance.
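The five-bucket split can be checked with a small script before a roadmap is approved. The sketch below is illustrative only: the bucket names are shorthand for the five buckets above, and the pilot amounts and the 50% ceiling on infrastructure are hypothetical assumptions, not figures from Gartner or Designveloper.

```python
# The five buckets named in the budget model above.
BUCKETS = [
    "infrastructure_and_cloud",
    "data_readiness",
    "software_and_integration",
    "governance_and_security",
    "adoption_and_training",
]


def bucket_shares(budget: dict[str, float]) -> dict[str, float]:
    """Return each bucket's share of the total budget (0.0 to 1.0)."""
    total = sum(budget[name] for name in BUCKETS)
    if total <= 0:
        raise ValueError("total budget must be positive")
    return {name: budget[name] / total for name in BUCKETS}


def is_infrastructure_heavy(budget: dict[str, float], ceiling: float = 0.5) -> bool:
    """Flag a plan whose first bucket exceeds an assumed ceiling share."""
    return bucket_shares(budget)["infrastructure_and_cloud"] > ceiling


# Hypothetical pilot budget in USD thousands (illustrative values only).
pilot_budget = {
    "infrastructure_and_cloud": 700,
    "data_readiness": 100,
    "software_and_integration": 120,
    "governance_and_security": 50,
    "adoption_and_training": 30,
}

pilot_shares = bucket_shares(pilot_budget)
print({name: round(share, 2) for name, share in pilot_shares.items()})
print("Infrastructure-heavy:", is_infrastructure_heavy(pilot_budget))
```

The ceiling is a planning convention a team would set for itself. The point of the check is to make an imbalance visible early, not to prescribe a fixed ratio.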

The strongest market opportunity sits between software and services. Firms need AI-ready products, but they also need custom integrations around legacy processes. They want reusable platforms, yet their data, approvals, and operating rules remain specific. This tension favors practical engineering teams. It also favors vendors that can start small, ship safely, and scale with proof.

For Designveloper, this aligns with real service demand. Clients ask for AI-powered business software, custom software, web applications, mobile applications, and VoIP systems. Those services now sit closer together. A voice-enabled support workflow may need AI summarization. A mobile finance app may need OCR and personal assistant actions. A web app may need AI search, smart approvals, or data extraction. The market no longer separates software delivery from AI capability as cleanly as before.

What This Means for Buyers

- Treat AI as a portfolio of workflow improvements, not a single tool purchase.

- Budget for data preparation, integration, security, monitoring, and change management from the start.

- Prioritize systems that remove a measurable bottleneck in customer service, operations, finance, HR, compliance, or product delivery.

- Avoid tools that create new manual review work without reducing old manual work.

- Ask each vendor to show how the solution will run in production, not only how the demo works.

The market signal is strong, but the buyer discipline must also rise. Larger budgets do not guarantee stronger outcomes. Clear workflow design does.

5. AI Spending: Infrastructure Leads, but Software Converts Value

The second market conclusion is direct. AI spending is large, but the value chain is uneven. Gartner forecasts worldwide AI spending to total $2.52 trillion in 2026. AI infrastructure takes the largest share. AI services and AI software follow. This mix shows that the market still builds the foundation while trying to prove business value on top of it.

Gartner also says AI infrastructure will reach $1.37 trillion in 2026, or roughly 54% of the forecast total. That figure includes the hardware and systems needed to run AI at scale. It reflects the price of compute, high-bandwidth memory, servers, accelerators, and surrounding platform demand. This infrastructure wave explains why data centers and cloud providers dominate market conversations.

Yet infrastructure alone does not change a company. A company changes when AI enters work. That is why AI services and AI software matter. Gartner lists AI services at $588.65 billion in 2026 and AI software at $452.46 billion in 2026. These categories translate infrastructure into implementation, user experience, integration, and support. They are closer to business outcomes.
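The split between the buckets is easier to compare as shares of the total. The sketch below uses only the Gartner figures quoted in this section. Because the total and the infrastructure figure are rounded to two decimals of a trillion, the percentages and the remainder are approximate.

```python
# Gartner 2026 AI spending figures as quoted in this report (USD billions).
TOTAL_AI_SPEND = 2520.0
NAMED_BUCKETS = {
    "AI infrastructure": 1370.0,
    "AI services": 588.65,
    "AI software": 452.46,
}


def share_of_total(amount: float, total: float = TOTAL_AI_SPEND) -> float:
    """Return an amount as a percentage of total forecast AI spending."""
    return 100.0 * amount / total


for name, amount in NAMED_BUCKETS.items():
    print(f"{name}: {share_of_total(amount):.1f}% of forecast AI spending")

# Whatever is left belongs to categories this section does not itemize.
remainder = TOTAL_AI_SPEND - sum(NAMED_BUCKETS.values())
print(f"Other categories: {remainder:.2f} billion "
      f"({share_of_total(remainder):.1f}%)")
```

On these numbers, services and software combined are still smaller than infrastructure, which is the imbalance this section describes.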

The practical lesson is simple. Companies should not ask, ‘How much AI are we buying?’ They should ask, ‘How much work are we improving?’ The right unit of analysis is the workflow. A workflow contains users, data, rules, approvals, exceptions, integrations, and metrics. AI can improve parts of it, but only if the full system supports the change.

A document workflow gives a clear example. A generic chatbot may answer questions. A production document workflow must upload files, classify pages, extract fields, summarize terms, highlight risk, support redaction, record approvals, and respect access rules. The model is one component. The software system makes it useful.

A developer workflow gives another example. An AI assistant may generate code. A production software team needs branch rules, code review, static analysis, tests, secret scanning, dependency checks, architecture guidelines, and release notes. AI output moves faster than human output. That makes quality gates more important, not less important.

A customer support workflow shows the same pattern. AI can summarize calls, suggest replies, classify tickets, and route issues. But a good system must respect customer context, channel history, escalation rules, compliance obligations, and human handoff points. When those layers are missing, AI may create confident but wrong answers. That harms trust.

The market therefore moves toward applied AI. Applied AI does not mean every process needs an agent. It means every process should be checked for repeatable judgment, high-volume text, slow handoffs, repetitive approvals, or data extraction. Those points can create value. The best candidates also have clear data sources and human review points.
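The screening described above can be expressed as a simple checklist. The sketch below is one possible encoding, not a Designveloper tool: the signal names mirror the criteria in the paragraph, while the function name, the example workflows, and the rule that a strong candidate needs both readiness signals are assumptions made for illustration.

```python
# Signals that suggest AI could add value, taken from the paragraph above.
VALUE_SIGNALS = (
    "repeatable_judgment",
    "high_volume_text",
    "slow_handoffs",
    "repetitive_approvals",
    "data_extraction",
)
# Conditions the best candidates also meet.
READINESS_SIGNALS = ("clear_data_sources", "human_review_points")


def screen_workflow(traits: set[str]) -> dict:
    """Classify a workflow from the set of traits observed in it."""
    value_hits = [s for s in VALUE_SIGNALS if s in traits]
    ready = all(s in traits for s in READINESS_SIGNALS)
    return {
        "value_signals": value_hits,
        "candidate": bool(value_hits),
        "strong_candidate": bool(value_hits) and ready,
    }


# Hypothetical workflows used only to exercise the checklist.
invoice_intake = {
    "high_volume_text",
    "data_extraction",
    "clear_data_sources",
    "human_review_points",
}
ad_hoc_research = {"high_volume_text"}

print(screen_workflow(invoice_intake))
print(screen_workflow(ad_hoc_research))
```

A checklist like this does not replace discovery work. It gives teams a shared vocabulary for explaining why one workflow is prioritized over another.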

Designveloper’s role fits this middle layer. The company builds custom AI systems, intelligent workflows, and digital products. That combination matters because most clients need more than a prompt. They need UI design, backend systems, cloud deployment, mobile access, communication flows, security, and maintainable code. They also need a partner that can turn AI ambition into production software.

Q1 2026 also shows a maturing buyer. Buyers now ask for ROI, safety, workflow fit, and adoption plans. They have seen prototypes. They now want durable systems. This maturity is healthy. It shifts attention from hype to delivery quality. It also creates a stronger market for engineering partners that can talk about data, users, code, security, and process in one conversation.

AI Spending Interpretation

| AI spend bucket | What it buys | Common mistake | Better approach |
|---|---|---|---|
| Infrastructure | Compute, servers, chips, data center capacity, and cloud capacity. | Treating capacity as the outcome. | Tie capacity to prioritized workloads and cost controls. |
| Services | Implementation, integration, consulting, migration, managed operations, and support. | Using services only after pilots fail. | Bring delivery design into the first roadmap. |
| Software | Apps, copilots, agents, analytics layers, and workflow tools. | Buying isolated subscriptions. | Connect software to approvals, data, roles, and metrics. |
| Cybersecurity | Controls, monitoring, identity, privacy, and security operations. | Adding security late. | Design security into data access and agent behavior. |
| Models and platforms | Model access, data science tools, ML platforms, and app development platforms. | Choosing tools before use cases. | Choose platforms after workflow and data assessment. |

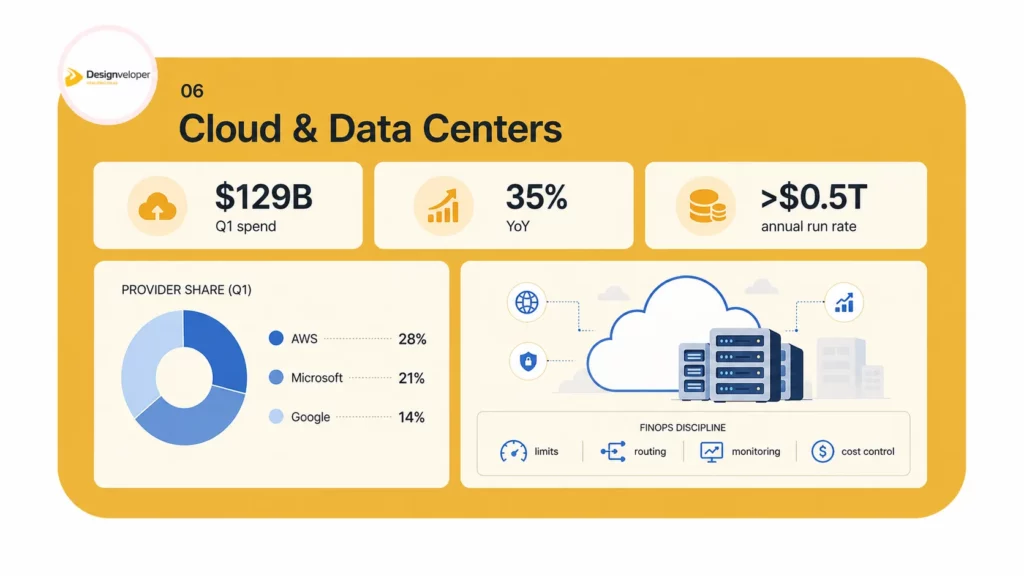

6. Cloud and Data Centers: Capacity Becomes Strategy

The third market conclusion is that cloud capacity has become a strategic constraint. Synergy Research Group says Q1 2026 enterprise spending on cloud infrastructure services reached $129 billion. It also says year-on-year growth reached 35%. This shows that AI demand did not replace cloud demand. It intensified it.

The same source reports that cloud infrastructure services now run at an annual revenue rate of more than half a trillion dollars. That figure matters because cloud is not only a storage or hosting category anymore. It is the operating layer for data products, AI inference, application modernization, analytics, and global software delivery.
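The run-rate claim follows directly from the quarterly figure. The sketch below annualizes the quoted $129 billion and backs out the prior-year quarter implied by 35% growth. Both results are derived from rounded inputs and are not figures published by Synergy.

```python
# Synergy Research Group figures as quoted in this report.
Q1_CLOUD_SPEND_BN = 129.0   # quarterly cloud infrastructure spend, USD billions
YOY_GROWTH = 0.35           # year-on-year growth rate

# Annualized run rate: one quarter multiplied by four.
annual_run_rate_bn = Q1_CLOUD_SPEND_BN * 4

# Prior-year Q1 spend implied by the quoted growth rate.
implied_prior_year_q1_bn = Q1_CLOUD_SPEND_BN / (1 + YOY_GROWTH)

print(f"Annualized run rate: ${annual_run_rate_bn:.0f} billion")
print(f"Implied prior-year Q1: ${implied_prior_year_q1_bn:.1f} billion")
```

A run rate is a projection of the current quarter, not a forecast, so it understates the full-year total if quarterly growth continues.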

Synergy also reports Q1 worldwide market shares of 28% for Amazon, 21% for Microsoft, and 14% for Google. The top providers still lead, but the cloud market also now includes neoclouds and AI-focused providers. This creates more choice and more complexity for buyers.

Cloud planning in 2026 requires two views. The first view is platform selection. Buyers must choose between major cloud providers, multi-cloud, regional providers, or specialized AI infrastructure. The second view is workload design. Buyers must decide where to run training, inference, data processing, document search, streaming, analytics, and mobile backends. Both views affect cost and resilience.

AI workloads raise cost questions faster than traditional web workloads. A user-facing agent may call a model many times in one conversation. A document pipeline may run OCR, embedding, retrieval, summarization, and redaction. A code assistant may connect to repositories and issue trackers. These workloads can create unpredictable usage. Without monitoring, they can surprise finance teams.

Cloud architecture should therefore include FinOps from day one. The project should define workload limits, model routing, caching, token budgets, queue design, fallback models, and usage dashboards. These controls protect both cost and user experience. They also help teams compare model quality against spend.
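Several of these controls fit into a thin wrapper around the model call. The sketch below combines a token budget, a response cache, and a fallback model. Everything in it is hypothetical: the class names, the model names, the 20% fallback threshold, and the placeholder response stand in for whatever provider SDK and pricing a real project uses.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Tracks token usage for one workflow against a fixed monthly cap."""
    monthly_cap: int
    used: int = 0

    def remaining(self) -> int:
        return max(self.monthly_cap - self.used, 0)

    def record(self, tokens: int) -> None:
        self.used += tokens


@dataclass
class GuardedClient:
    """Wraps a model call with a cache, a token budget, and a fallback."""
    budget: TokenBudget
    primary: str = "large-model"      # hypothetical model names
    fallback: str = "small-model"
    fallback_threshold: float = 0.2   # assumed: switch below 20% of the cap
    cache: dict = field(default_factory=dict)

    def pick_model(self) -> str:
        floor = self.fallback_threshold * self.budget.monthly_cap
        return self.fallback if self.budget.remaining() < floor else self.primary

    def ask(self, prompt: str, estimated_tokens: int) -> str:
        if prompt in self.cache:      # cached answers consume no budget
            return self.cache[prompt]
        if estimated_tokens > self.budget.remaining():
            raise RuntimeError("token budget exhausted for this workflow")
        model = self.pick_model()
        self.budget.record(estimated_tokens)
        # Placeholder for a real provider call.
        answer = f"[{model}] response to: {prompt}"
        self.cache[prompt] = answer
        return answer


client = GuardedClient(budget=TokenBudget(monthly_cap=1000))
first = client.ask("Summarize invoice 1", estimated_tokens=850)
second = client.ask("Summarize invoice 2", estimated_tokens=100)
repeat = client.ask("Summarize invoice 1", estimated_tokens=850)
print(first, second, repeat, client.budget.used, sep="\n")
```

In this run the second request is routed to the fallback model because less than 20% of the cap remains, and the repeated prompt is served from the cache without touching the budget. A production system would also need queueing, usage dashboards, and real token counts from the provider.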

Security also changes. AI workflows often touch sensitive documents, private code, support transcripts, HR requests, invoice data, and customer records. This means cloud architecture must include identity, encryption, logging, retention rules, and data residency decisions. The fastest route to production can become expensive if compliance work starts late.

Designveloper’s delivery experience points to a practical pattern. Start with a narrow workflow. Build the minimum data pipeline. Create a secure interface. Add review checkpoints. Measure speed, cost, and accuracy. Then expand. This pattern avoids overbuilding infrastructure before the team proves business value.

Vietnam and Southeast Asia also matter in this layer. Regional demand keeps rising. Reuters reports that Southeast Asia’s data center capacity could grow 2.8 times, based on the Google, Temasek, and Bain report. That capacity buildout supports cloud, AI, and digital services. It also makes regional architecture choices more important for latency, cost, and compliance.

Cloud is now a market signal and a design problem. It signals demand because spending keeps rising. It becomes a design problem because every AI workflow must run somewhere, store something, and expose risk somewhere. The strongest teams treat cloud as part of product strategy, not just infrastructure procurement.

Designveloper Interpretation for Cloud-Backed AI

A practical AI product roadmap should answer six cloud questions before development begins. Where does data live? Which systems can access it? Which model runs each task? How will usage cost be tracked? What happens when the model fails? How will the system log and audit decisions? These questions keep teams from moving fast in the wrong direction.

- Use managed cloud services when speed and reliability matter more than custom infrastructure.

- Use private or hybrid patterns when documents, code, health data, finance data, or regulated data need tighter controls.

- Use model routing when the workflow has different quality and cost needs by task.

- Use monitoring to track latency, failure rate, token cost, hallucination risk, and user corrections.

- Use human approval points for high-impact decisions.
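Model routing and human approval points from the list above can be captured in one routing table. The sketch below is a minimal illustration: the task names, model names, and the rule that unknown tasks default to the cautious path are all assumptions, not a description of any vendor's router.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """Which model handles a task and whether a person must sign off."""
    model: str
    needs_human_approval: bool


# Hypothetical routing table: cheaper models for low-risk, high-volume
# tasks, stronger models plus approval for high-impact decisions.
ROUTES = {
    "classify_ticket": Route("small-model", False),
    "summarize_contract": Route("large-model", False),
    "approve_refund": Route("large-model", True),
}

# Assumed default: tasks nobody has assessed take the cautious path.
DEFAULT_ROUTE = Route("large-model", True)


def route_task(task: str) -> Route:
    """Look up the route for a task, falling back to the cautious default."""
    return ROUTES.get(task, DEFAULT_ROUTE)


for task in ("classify_ticket", "approve_refund", "draft_policy"):
    route = route_task(task)
    print(f"{task}: model={route.model}, approval={route.needs_human_approval}")
```

Keeping the table in configuration rather than scattered through code makes it easier to audit which decisions require a person and to change models as costs shift.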

7. Software Development: AI Changes the Delivery System

The fourth market conclusion is that AI has become normal in software development, but the production bar has gone up. Stack Overflow reports that 84% of respondents are using or planning to use AI tools in development. It also reports that 51% of professional developers use AI tools daily. AI is now part of the workbench.

Yet the same source shows friction. Stack Overflow says more developers actively distrust the accuracy of AI tools than trust it, at 46% versus 33%. This gap is the core software delivery story. Teams will use AI because it helps produce drafts, tests, explanations, and boilerplate. Teams will also need stronger review because the output can look correct while hiding errors.

GitHub’s Octoverse 2025 adds another signal. GitHub says more than 180 million developers now build on the platform. It also says developers created more than 230 new repositories every minute and pushed nearly 1 billion commits in 2025. The platform also reports that 80% of new developers on GitHub use Copilot within their first week. AI-assisted development is no longer an edge case.

GitHub also reports that more than 1.1 million public repositories now use an LLM SDK. This shows that developers are not only using AI to write code. They are building AI into products. That expands demand for AI application architecture, evaluation systems, observability, prompt management, retrieval, security, and model cost control.

The change affects every stage of software delivery. During discovery, AI can summarize user research and draft requirements. During design, it can produce UI variations and test copy. During coding, it can scaffold components, endpoints, tests, migrations, and documentation. During QA, it can propose test cases and explain bugs. During operations, it can summarize incidents and support runbooks. These benefits are real.

The risks are also real. AI may invent APIs, miss edge cases, overfit to examples, ignore security rules, produce fragile tests, or create code that works locally but fails at scale. It may also generate code with licensing or dependency risks. A mature team therefore treats AI output as a draft. It does not treat AI output as shipped work.

This changes the developer skill mix. Developers need to write prompts, but that is not enough. They need to evaluate code, understand architecture, test behavior, secure systems, and explain tradeoffs. Senior developers may spend more time reviewing and designing. Junior developers may get less repetitive starter work. That creates a training problem if companies do not build new learning paths.

Designveloper’s view is practical. AI can speed development when teams already have clear standards. It can slow development when teams lack standards. The difference comes from the delivery system around the tool. Teams need coding guidelines, review checklists, secure defaults, reusable architecture, CI/CD gates, and clear acceptance criteria.
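A CI/CD gate of the kind listed above can be reduced to a function that returns the reasons a change may not merge. The sketch below is hypothetical: the field names, the checks chosen, and the rule that AI-assisted changes need at least one human approval are illustrative assumptions, and a real pipeline would read these values from its own CI and code review systems.

```python
from dataclasses import dataclass


@dataclass
class ChangeSet:
    """Facts a pipeline would collect about one proposed change."""
    ai_assisted: bool
    tests_passed: bool
    static_analysis_clean: bool
    secret_scan_clean: bool
    human_approvals: int


def merge_blockers(change: ChangeSet, min_approvals_ai: int = 1) -> list[str]:
    """Return the reasons a change cannot merge; an empty list means it can."""
    blockers = []
    if not change.tests_passed:
        blockers.append("tests failing")
    if not change.static_analysis_clean:
        blockers.append("static analysis findings")
    if not change.secret_scan_clean:
        blockers.append("possible secret in diff")
    # Assumed policy: AI-assisted work is a draft until a person reviews it.
    if change.ai_assisted and change.human_approvals < min_approvals_ai:
        blockers.append("AI-assisted change lacks human review")
    return blockers


draft = ChangeSet(
    ai_assisted=True,
    tests_passed=True,
    static_analysis_clean=True,
    secret_scan_clean=True,
    human_approvals=0,
)
print(merge_blockers(draft))

draft.human_approvals = 1
print(merge_blockers(draft))
```

Returning reasons rather than a bare yes or no keeps the gate explainable, which matters when reviewers need to see why an otherwise green change is still held back.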

AI also increases the value of product context. A generic code answer may solve the visible problem. A product-aware engineer asks whether the answer fits the business rule, database schema, API contract, latency target, accessibility need, and long-term roadmap. That kind of judgment remains human-led.

For buyers, this means a vendor’s AI use should not be judged by speed claims alone. Buyers should ask how the vendor reviews AI-assisted code, how it tests generated code, how it protects client data, how it handles security, and how it documents decisions. Faster output has value only when quality and maintainability stay intact.

Software Delivery Model for AI-Assisted Teams

| Delivery layer | AI can help with | Human control must cover |
|---|---|---|
| Discovery | Summaries, competitor notes, user story drafts, and workflow mapping. | User intent, scope, business rules, and stakeholder alignment. |
| Design | Wireframe ideas, microcopy drafts, variants, and accessibility checks. | Usability, brand fit, user research, and edge cases. |
| Engineering | Boilerplate, components, tests, queries, scripts, and explanations. | Architecture, security, performance, integration, and maintainability. |
| QA | Test ideas, bug reproduction steps, and automated test drafts. | Coverage quality, risk priority, regression strategy, and release approval. |
| Operations | Incident summaries, log interpretation, runbooks, and support replies. | Root-cause analysis, customer impact, escalation, and compliance. |

The best use of AI in software development is not blind generation. It is guided acceleration. A team sets standards first. Then AI helps the team move within those standards.

8. Employment and Tech Careers: The Task Mix Shifts

The fifth market conclusion is that AI changes tasks before it changes job titles. The World Economic Forum expects job disruption to affect 22% of jobs by 2030. It expects 170 million new roles and 92 million displaced roles, a net gain of 78 million. The net effect may look positive, but the transition will not feel smooth for every worker or company.

Skills pressure already appears. The same WEF release says the skills gap is the most significant barrier to business transformation, cited by 63% of employers. It also says nearly 40% of skills required on the job will change. This explains why hiring alone cannot solve the problem. Companies need reskilling, role redesign, and better internal learning systems.

LinkedIn also points to fast skill change. It says that from 2015 to 2030, 70% of the skills used in most jobs will change. It identifies AI literacy as one of the fastest-growing skills across regions and job functions. This does not mean everyone must become an AI engineer. It means many workers must learn how AI changes their specific work.

PwC’s 2025 Global AI Jobs Barometer adds a wage signal. It reports that AI-skilled workers see an average 56% wage premium. It also reports that industries most exposed to AI saw 3x higher growth in revenue per employee than less exposed industries. This suggests that AI skills increasingly carry labor market value, especially when workers combine them with domain knowledge.

Stanford’s 2026 AI Index adds a cautionary point. Its takeaways say employment among U.S. software developers aged 22 to 25 has fallen nearly 20% since 2024, while headcount among their older colleagues has kept growing. This does not prove that AI alone caused the decline. Interest rates, overhiring, layoffs, outsourcing, and macro cycles also matter. Still, it supports a clear concern. Early career pathways may become narrower if companies automate junior tasks without replacing them with structured learning.

This is one of the most important human questions in the market. Many teams trained juniors through repetitive tasks. These included simple bug fixes, basic components, documentation updates, QA scripts, data cleanup, and support triage. AI can now assist with many of these tasks. If companies remove them without designing new learning loops, junior workers lose practice. Senior workers then carry more review work. The organization may gain speed in the short run and lose capability later.

A better model is task redesign. Companies should decide which tasks AI should draft, which tasks humans should review, and which tasks humans should own. They should also build junior learning around evaluation, testing, user context, debugging, and safe tool use. This gives early career workers meaningful work while still using AI to reduce low-value repetition.

The same idea applies outside software roles. HR teams can use AI to summarize policies and automate leave requests. Finance teams can use AI to extract invoice data and categorize transactions. Customer service teams can use AI to summarize conversations and propose replies. Marketing teams can use AI to draft and repurpose content. Each case needs human judgment, approval, and quality control.

Career value in 2026 will come from AI-plus profiles. A developer with AI plus security becomes more valuable. So does a product manager with AI plus analytics, a recruiter with AI plus talent strategy, or a support lead with AI plus process design. The plus part matters because generic AI literacy will become common.

For employers, the conclusion is direct. Do not just buy AI tools. Build AI operating skills. Create playbooks. Set review standards. Train teams on when not to use AI. Give managers ways to measure task-level improvement. Then link skill plans to actual workflows. That is how AI supports people instead of only pressuring them.

Career Implications for Technology Roles

| Role family | Task pressure | Value moves toward |
|---|---|---|
| Junior developers | Routine scaffolding, simple fixes, documentation, and basic tests. | Debugging, code reading, review skills, system thinking, and secure tool use. |
| Senior developers | Less time on boilerplate, more AI-generated code to review. | Architecture, governance, mentoring, quality systems, and release risk. |
| QA engineers | AI can draft test cases and summarize defects. | Risk-based testing, automation strategy, data setup, and edge-case thinking. |
| Product managers | AI can summarize feedback and draft requirements. | Prioritization, user research, business judgment, and cross-team decisions. |
| Security teams | AI can triage alerts and explain risks. | Identity, data policy, model threat design, and response playbooks. |
| Operations teams | AI can route requests and create summaries. | Process redesign, exception handling, SLA management, and change adoption. |

9. Enterprise Demand: Workflows Beat Generic AI Demos

The sixth market conclusion is that enterprise AI demand is becoming workflow-specific. Buyers have seen enough chatbot demos. They now ask for systems that reduce handoffs, shorten approvals, improve support quality, extract data, or increase delivery speed. This changes the vendor conversation. The best question is no longer, ‘Can AI do this?’ The best question is, ‘Can this workflow run better with AI, data, software, and people together?’

McKinsey’s Global Tech Agenda 2026 supports this shift. It says top CIOs are weaving AI and data into operating models to build intelligence-driven enterprises. The survey includes 632 participants from C-level and IT roles across industries. The main message aligns with this report. Technology leaders now shape enterprise strategy, not only infrastructure.

Enterprise AI use cases tend to cluster around repeated knowledge work. Documents, tickets, chats, invoices, policies, calls, product records, and software repositories all contain high-volume text or structured data. They also contain business rules. That makes them strong candidates for AI-assisted workflows, but only when the company can control data access and review outputs.

Document intelligence remains one of the clearest near-term areas. Many teams still read contracts, policies, receipts, onboarding papers, invoices, claims, forms, and internal documents by hand. AI can extract fields, summarize terms, compare versions, classify risk, translate text, and redact sensitive data. The value comes from reducing manual review time while preserving human approval.

Customer support also has strong demand. Support teams handle high message volume and repeated questions. AI can summarize conversations, draft responses, route issues, detect sentiment, and suggest knowledge base articles. But the system must avoid over-automation. A frustrated customer needs empathy, context, and escalation. The workflow should support the agent, not hide the human.

HR automation is another practical area. HR teams receive many repetitive requests. Employees ask about leave, booking, policies, approvals, benefits, onboarding, and internal rules. AI assistants can answer routine questions and start workflows. Yet HR data is sensitive. The system must protect privacy, log approvals, and route exceptions to human owners.

Finance and accounting workflows also fit. Teams process invoices, receipts, transaction descriptions, reimbursements, and tax-related categories. AI can extract data and suggest classifications. It can also flag missing fields or anomalies. But finance teams need audit trails. They need to see who approved what and when. This makes workflow software just as important as model quality.

Product content and commerce operations offer another example. Teams may need to import product records from images, clean backgrounds, generate titles, and summarize customer conversations. AI can reduce repetitive content work. But the team still needs style rules, accuracy checks, marketplace constraints, and human review for high-value listings.

The common pattern is clear. High-value AI use cases share four traits. They involve repeated tasks. They have accessible data. They allow review. They connect to a business metric. If a use case lacks these traits, it may still be interesting, but it may not deserve first priority.

Designveloper’s service fit sits exactly here. The company can combine AI engineering, workflow automation, web development, mobile development, VOIP, UI/UX, and custom software delivery. That mix helps when a client needs to turn a use case into a working system. A working system needs interfaces, integrations, data pipelines, permissions, monitoring, and support. AI alone does not supply those pieces.

This is also where Designveloper can add more than implementation. A delivery partner can help clients choose use cases, map steps, create pilot criteria, design user roles, and build a roadmap. That reduces the risk of building a clever demo that nobody uses. It also helps clients prepare for scale before the first release expands.

The market will reward the teams that ask narrow questions first. Which document type creates the most delay? Which approval takes the longest? Which support question repeats most often? Which internal request consumes the most HR time? Which customer journey breaks because data sits in another system? These questions lead to practical AI projects.

Priority Workflow Map

| Workflow | Typical AI functions | Production requirements | Business metric |
|---|---|---|---|
| Document review | OCR, extraction, search, summarization, redaction, translation. | Role-based access, review queues, version control, audit trail. | Review time, error rate, approval speed. |
| Customer support | Conversation summary, routing, draft replies, sentiment, knowledge retrieval. | Human handoff, customer history, escalation logic, QA sampling. | First response time, resolution time, CSAT. |
| HR operations | Policy lookup, leave requests, booking, approvals, onboarding answers. | Privacy controls, identity, approval records, escalation flow. | HR ticket volume, employee response time. |
| Finance back office | Invoice extraction, autofill, categorization, anomaly flags. | Audit trail, approval checkpoints, finance system integration. | Processing time, correction rate, compliance quality. |
| Software delivery | Code drafts, test drafts, docs, review suggestions, incident summaries. | CI/CD gates, security scanning, review standards, repo rules. | Lead time, defect rate, review load. |
| Commerce operations | Image cleanup, product title generation, description drafts, support summaries. | Style rules, marketplace checks, human approval, asset management. | Listing speed, conversion quality, support workload. |

10. Cybersecurity and Governance: Agent Risk Becomes Real

The seventh market conclusion is that AI governance moves from policy into daily operations. Gartner’s 2026 cybersecurity trends list names agentic AI oversight as a top trend. It warns that employees and developers can use agentic AI in ways that create new attack surfaces, including unmanaged agents, unsecured code, and compliance violations. This makes governance part of product design, not only a legal document.

Gartner also reports a workplace behavior risk. In a survey of 175 employees, more than 57% said they use personal GenAI accounts for work, and 33% admitted to entering sensitive information into unapproved tools. This is one of the clearest operational warnings for 2026. Employees will use AI when it helps. If the company does not give safe options, they may use unsafe ones.

AI agents create a different risk profile from normal applications. A normal app usually waits for user actions. An agent may plan steps, call tools, read files, write data, send messages, or trigger workflows. That increases the importance of identity, permissions, logging, data boundaries, and approval thresholds.

The security model should cover both sanctioned and unsanctioned AI. Sanctioned AI includes tools the company approves and monitors. Unsanctioned AI includes personal accounts, browser extensions, copy-pasted prompts, informal scripts, and shadow automations. The second group can grow fast because employees want speed. Security teams need visibility before they can create control.

A practical AI governance program begins with inventory. Which teams use AI? Which tools do they use? What data enters those tools? Which outputs affect customers, code, finance, HR, or compliance? Which workflows allow an AI system to take action? These questions define the risk surface.

The next layer is access. AI agents should not inherit broad human permissions by default. They need scoped identities. They need tool-level permissions. They need logs that show what data they accessed and what actions they took. They need approval gates for high-risk actions. This reduces blast radius.
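The access layer described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `AgentIdentity` and `ToolGateway` classes, the tool names, and the decision labels are all hypothetical. It shows one scoped identity per agent, tool-level permissions, an approval gate for higher-risk actions, and a log entry for every request.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentIdentity:
    """A scoped identity for one AI agent (hypothetical structure)."""
    name: str
    allowed_tools: frozenset          # tools this agent may call at all
    needs_approval: frozenset = frozenset()  # tools that also need a human sign-off


@dataclass
class ToolGateway:
    """Checks each tool call against the agent's scope and logs the decision."""
    audit_log: list = field(default_factory=list)

    def request(self, agent: AgentIdentity, tool: str,
                approved_by: Optional[str] = None) -> str:
        if tool not in agent.allowed_tools:
            decision = "denied"            # outside the agent's scope
        elif tool in agent.needs_approval and approved_by is None:
            decision = "pending_approval"  # high-risk action waits for a human
        else:
            decision = "allowed"
        # Every request is logged, including denied ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent.name,
            "tool": tool,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision


# Assumed example: an HR assistant that can look up policies freely
# but needs a manager's approval before submitting a leave request.
hr_bot = AgentIdentity(
    name="hr-assistant",
    allowed_tools=frozenset({"policy_lookup", "submit_leave_request"}),
    needs_approval=frozenset({"submit_leave_request"}),
)
gateway = ToolGateway()
for tool, approver in [
    ("policy_lookup", None),
    ("submit_leave_request", None),
    ("submit_leave_request", "manager-01"),
    ("export_payroll", None),
]:
    print(tool, "->", gateway.request(hr_bot, tool, approved_by=approver))
```

The design choice worth noting is deny-by-default: the agent gets nothing it was not explicitly granted, which is what keeps the blast radius small.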

The third layer is data policy. Sensitive data should not move into unapproved systems. Teams need rules for customer data, employee data, source code, credentials, financial records, contracts, and intellectual property. These rules should appear inside tools and workflows. Policy documents alone do not stop risky copy-paste behavior.
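One way to put a data rule inside a workflow, rather than only in a policy document, is a check that runs before text leaves for an AI tool. The sketch below is illustrative only. The two regular expressions, the `APPROVED_TOOLS` set, and the function names are assumptions, and real detection of sensitive data needs far broader and better-tested rules.

```python
from __future__ import annotations

import re

# Hypothetical patterns for two data classes; real policies cover many more.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "credential": re.compile(r"(?i)\b(?:api[_-]?key|password|token)\s*[:=]\s*\S+"),
}

# Assumed list of tools the company has approved and monitors.
APPROVED_TOOLS = {"internal-assistant"}


def find_sensitive(text: str) -> list[str]:
    """Return the names of the data classes detected in the text."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    )


def may_send(text: str, tool: str) -> tuple[bool, list[str]]:
    """Allow sending unless sensitive data is headed to an unapproved tool."""
    hits = find_sensitive(text)
    allowed = tool in APPROVED_TOOLS or not hits
    return allowed, hits


print(may_send("Summarize the ticket from anna@example.com", "personal-chatbot"))
print(may_send("Summarize our public release notes", "personal-chatbot"))
print(may_send("password = hunter2", "internal-assistant"))
```

A check like this would typically sit in a browser extension, proxy, or internal gateway, so the rule applies at the moment of copy-paste rather than after the fact.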

The fourth layer is evaluation. AI systems should be tested against expected outputs, edge cases, jailbreak attempts, and domain-specific failures. For example, a finance assistant should not invent tax categories. A medical assistant should not give unsafe advice. A support assistant should not expose another customer’s information. A code assistant should not introduce secrets or insecure dependencies.

The fifth layer is incident response. Companies need to know what to do when an AI tool leaks data, triggers the wrong workflow, produces harmful output, or gets misused. That playbook should include owners, logs, containment steps, customer communication, legal review, and model or prompt rollback.

Designveloper’s delivery stance is governance-by-design. A production-ready AI system should include controls from the first architecture discussion. This keeps governance from slowing the project late. It also gives business teams confidence to adopt the system because risks are visible and managed.

This is especially important for sectors with sensitive data. Finance, healthcare, legal, education, HR, and B2B SaaS teams need careful access and audit design. They can still use AI. But they should build AI inside clear boundaries. Those boundaries make adoption safer.

The market implication is strong. Cybersecurity and AI governance will become buying criteria. Buyers will ask vendors how they handle data isolation, encryption, access control, logs, evaluation, model providers, and human review. Vendors that cannot answer these questions will look risky, even if their demos look impressive.

Minimum AI Governance Checklist

- Create an AI tool inventory and classify sanctioned and unsanctioned use.

- Map data classes and forbid sensitive data in unapproved tools.

- Use scoped identities for AI agents and tool calls.

- Log prompts, tool actions, data access, and approvals where appropriate.

- Use human review for high-impact outputs and external communications.

- Test systems against edge cases, jailbreaks, policy violations, and hallucination risk.

- Create an AI incident response playbook.

- Review vendor contracts for retention, training, security, and data residency terms.

11. Vietnam and Southeast Asia: A Stronger Digital Delivery Hub

The eighth market conclusion is that Vietnam enters 2026 with a stronger digital and AI story. Google, Temasek, and Bain expect Vietnam’s digital economy to reach $39 billion in GMV in 2025, with 17% year-on-year growth. That gives Vietnam one of the strongest digital growth profiles in Southeast Asia.

The same announcement says Vietnam leads Southeast Asia on several AI readiness indicators. It reports that 81% of users interact with AI daily, 83% take part in AI learning or upskilling activities, and 96% are willing to share data access with AI agents. These figures show high consumer openness to AI. They also suggest a market where AI-enabled products can reach users with less cultural resistance.

The Vietnam digital economy also shows sector depth. E-commerce remains the largest contributor, and the announcement forecasts that e-commerce will exceed $25 billion in 2025. Digital financial services also matter. Google, Temasek, and Bain forecast digital payment transaction value at $178 billion in 2025. These are strong signals for web, mobile, payments, commerce, and AI-assisted service workflows.

Vietnam’s AI startup base is also forming. The announcement says Vietnam has more than 40 active AI startups and recorded $123 million in private AI funding over the past year. This does not make Vietnam the largest AI funding market in the region, but it shows momentum. It also gives software companies more local talent, partners, and use case knowledge.

Southeast Asia’s wider funding picture is mixed. Reuters reports that private funding for the region’s internet economy grew 15% year over year to $7.7 billion in the 12 months to June 2025, but remained far below the 2021 peak. The same report says AI-related investments made up 32% of private funding in the first half of 2025. This creates a selective market. Capital is cautious, but AI remains a bright spot.

Foreign technology investment adds another signal. Reuters reported that SAP would invest 150 million euros in a Vietnam R&D center over five years, with SAP Labs Vietnam located in Ho Chi Minh City and targeting 500 employees by 2027. This supports the view that Vietnam is gaining importance as a technology talent and engineering location.

AI infrastructure investment also matters. Reuters previously reported that FPT planned a $200 million AI factory using Nvidia chips and software. This kind of investment helps position Vietnam in AI research, digital infrastructure, and high-value software services. It also signals confidence from large technology ecosystems.

For global buyers, Vietnam offers a useful combination. It has strong software delivery capacity, growing AI adoption, competitive development economics, and a young digital market. It also has proximity to wider Asia-Pacific demand. This does not mean every project should move to Vietnam. It means Vietnam should be on the shortlist for AI-enabled software, workflow automation, web apps, mobile apps, SaaS products, and product engineering.

For Vietnamese software companies, the market opportunity is clear. They can move beyond outsourcing tasks and into AI-first delivery partnerships. That requires stronger consulting, security, product design, data engineering, and workflow knowledge. It also requires a clear brand position. Clients need partners who can explain how AI becomes software, not just say they use AI.

Designveloper is well placed for this shift. The company is based in Ho Chi Minh City and presents itself publicly as a software development company formed in 2013. Its service lines include web, mobile, UI/UX, VOIP, custom software, and AI development. Those capabilities match the 2026 demand pattern because clients want AI inside products and operations, not as a separate experiment.

Vietnam’s next challenge will be trust. High adoption helps, but production AI needs quality, privacy, security, and reliable delivery. Firms that build those capabilities can compete for more strategic work. Firms that sell only low-cost coding may face pressure as AI automates simpler tasks. The move up the value chain is now necessary.

The Designveloper reading is optimistic but grounded. Vietnam can become a stronger digital delivery hub in 2026. It can serve domestic companies that want AI workflows and international companies that want engineering partners. But the value will come from production-ready systems, not from broad claims about AI transformation.

Vietnam Market Opportunity Map

| Opportunity area | Why it matters | Likely buyer need |
|---|---|---|
| AI-enabled commerce | E-commerce and video commerce keep expanding. | Product content automation, support automation, recommendation UX, mobile experiences. |
| Digital finance | Digital payments and finance workflows grow fast. | OCR, invoice extraction, transaction categorization, secure mobile flows. |
| Enterprise workflow automation | Businesses face repetitive internal operations. | HR assistants, document review, approvals, support knowledge, internal tools. |
| Product engineering for global firms | Vietnam gains credibility as a development hub. | MVPs, SaaS products, modernization, AI feature integration. |
| Cloud and data engineering | AI workloads need stronger infrastructure and data layers. | RAG, data pipelines, observability, cost controls, security design. |

12. Designveloper Market View and Service Fit

Designveloper reads the Q1 2026 market through one core idea. AI must work inside real products and operations. This matches the company’s public position as a software development company in Ho Chi Minh City, formed in 2013, and its growing AI-first service direction. It also matches the market data in this report. Budgets are rising, but buyers now need proof, integration, and production quality.

Public profile data also supports Designveloper’s delivery credibility. DesignRush lists Designveloper with 13+ years of experience, 20+ industries served, 50+ advanced technologies applied, and 100+ completed projects. These figures matter because AI buyers want partners that understand software delivery beyond a single trend cycle.

Designveloper’s service stack covers several areas that fit 2026 demand. Its public service pages include custom software development, web app development, mobile app development, UI/UX design, and VOIP app development. Its AI service page presents AI software development for tailored business needs. Together, these capabilities match the market shift toward AI-enabled applications, assistants, automation, and voice-enabled workflows.

The strongest role for Designveloper is not to present AI as magic. It is to present AI as a production layer inside software. That means translating a client problem into a workflow map, selecting the right data sources, designing the user interface, building the backend, integrating AI services, adding security controls, and measuring outcomes. This is practical work. It is also where many AI projects fail when they stay at the demo level.

Designveloper’s internal proof examples show this direction. Song Nhi demonstrates an AI financial assistant with OCR-based transaction extraction, chatbot actions, auto-tagging, account descriptions, personalized responses, and multi-model fallback. Lumin demonstrates document intelligence through in-document chat, summarization, agreement generation, smart redaction, and translation. Aha shows commerce operations through batch product import from low-quality images, background cleanup, AI-generated titles and descriptions, support conversation summaries, and AI coding acceleration.

Other examples extend the pattern. Lodg shows finance and accounting support through invoice extraction, autofill, transaction categorization, and tax-related classification support. An internal HRM assistant shows employee self-service through booking, leave requests, approvals, and policy lookup inside Mattermost. These examples matter because they tie AI to workflows. They are not abstract demos.

The market message for Designveloper should therefore stay focused. We help teams turn AI opportunities into production-ready software and business workflows. That line connects to buyer needs because many companies still sit between interest and implementation. They know AI matters. They need help making it safe, useful, and measurable.

The buyer groups are also clear. Product teams need to add AI without slowing roadmap, quality, or release speed. Operational businesses need to reduce repetitive work and shorten handoffs. Internal teams with high-volume workflows need document, approval, support, HR, or call-flow automation. Each group needs a different sales path, but the delivery foundation is similar.

Designveloper should continue to show concrete workflows in content. A tech market report like this should not only describe AI spending. It should show what a buyer can do next. For example, a fintech buyer may need AI transaction categorization. A legal-tech buyer may need document chat and redaction. A commerce buyer may need product content automation. An HR buyer may need policy lookup and approval automation. A SaaS buyer may need AI features that fit current product architecture.

The company should also speak carefully about AI and employment. The market does not need fear-based messaging. It needs guidance. Designveloper can explain that AI compresses routine tasks while increasing the value of architecture, quality, security, data, and product judgment. This view supports buyers and workers. It also reflects the company’s role as a builder, not a hype vendor.

The 2026 market will reward proof. Designveloper can strengthen proof by publishing more case studies that name the workflow, the AI function, the software layer, and the operational result. Numbers help when available, but clarity also matters. A reader should understand what changed before and after the solution.

The service opportunity is broad. It includes AI workflow audits, custom AI system development, RAG systems, AI agents, document intelligence, HR automation, customer support automation, mobile AI experiences, web application modernization, VOIP-enabled AI workflows, DevOps support, and secure cloud deployment. The best offers will start with a business problem and end with a measurable workflow outcome.

Recommended Designveloper Offer Positioning

| Offer | Best-fit buyer | Message |
|---|---|---|
| AI Workflow Audit | Teams that know AI matters but do not know where to start. | Find the workflows where AI can reduce manual work safely and measurably. |
| Custom AI Systems | Companies with unique data, rules, or approval flows. | Build AI around the way the business already works. |
| Document Intelligence | Legal, finance, operations, HR, and SaaS teams. | Extract, summarize, redact, translate, search, and approve documents faster. |
| AI Agents and Assistants | Teams with repetitive internal or customer-facing requests. | Create assistants that answer, route, and act under clear controls. |
| AI-Ready Web and Mobile Products | Founders, product teams, and enterprises. | Add AI features to real applications without weakening quality or release speed. |
| VOIP and Call Workflows | Support, sales, service, and operations teams. | Summarize calls, route follow-ups, and connect voice workflows to business systems. |

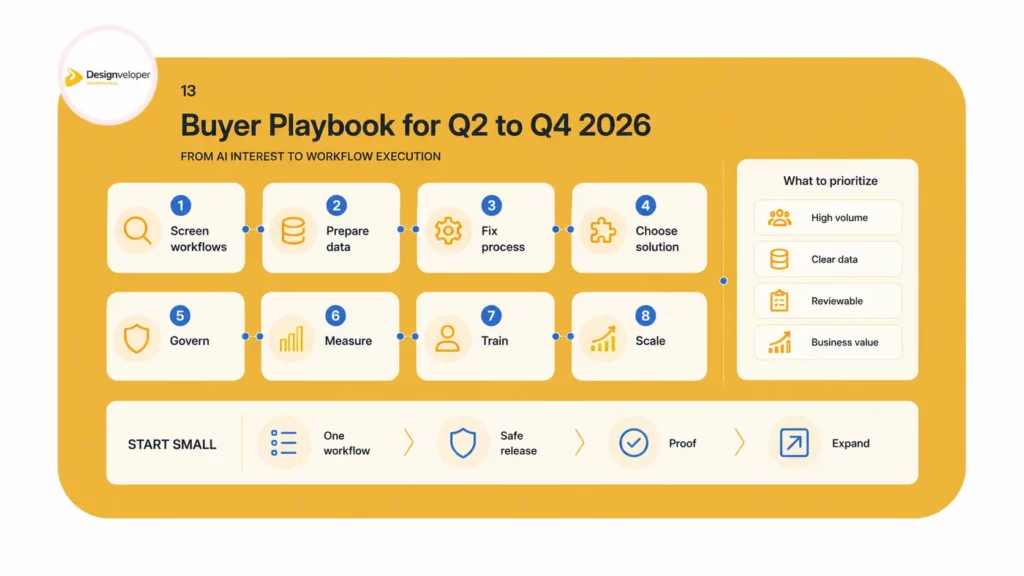

13. Buyer Playbook for Q2-Q4 2026

The final market conclusion is action-oriented. The rest of 2026 will favor buyers that move from broad AI interest to narrow workflow execution. The companies that win will not be the ones with the longest AI tool list. They will be the ones that select better use cases, prepare data, protect users, and ship improvements that employees and customers actually use.

A good Q2-Q4 roadmap starts with a portfolio screen. List the workflows that create delay, cost, or customer frustration. Score each workflow by volume, data access, risk, review need, and business value. Then choose one or two high-value workflows for a first production release. This creates focus.
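The portfolio screen can start as a simple weighted score. The sketch below assumes each workflow is rated from 1 to 5 on the five criteria named above. The weights, the sample ratings, and the names `Workflow`, `score`, and `shortlist` are hypothetical and should be replaced with values the team agrees on. Risk and review need carry negative weights because they make a first release harder.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical weights; positive criteria add to the score, risk and review need subtract.
WEIGHTS = {
    "volume": 0.25,
    "data_access": 0.20,
    "business_value": 0.30,
    "risk": -0.15,
    "review_need": -0.10,
}


@dataclass
class Workflow:
    name: str
    volume: int          # 1 (rare) to 5 (very frequent)
    data_access: int     # 1 (scattered data) to 5 (clean, reachable data)
    risk: int            # 1 (low impact if wrong) to 5 (high impact)
    review_need: int     # 1 (light review) to 5 (heavy expert review)
    business_value: int  # 1 (minor) to 5 (tied to a key metric)


def score(workflow: Workflow) -> float:
    """Weighted sum of the five ratings."""
    return sum(weight * getattr(workflow, key) for key, weight in WEIGHTS.items())


def shortlist(workflows: list[Workflow], top_n: int = 2) -> list[Workflow]:
    """Return the top_n workflows by score, highest first."""
    return sorted(workflows, key=score, reverse=True)[:top_n]


# Assumed sample ratings for illustration only.
candidates = [
    Workflow("Invoice extraction", volume=5, data_access=4, risk=2, review_need=2, business_value=4),
    Workflow("Support summaries", volume=4, data_access=5, risk=1, review_need=2, business_value=3),
    Workflow("Contract drafting", volume=2, data_access=2, risk=5, review_need=5, business_value=4),
]

for wf in shortlist(candidates):
    print(f"{wf.name}: {score(wf):.2f}")
```

The numbers matter less than the conversation they force. Scoring in a shared sheet or script makes the team state why one workflow goes first.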

The next step is data readiness. Many AI projects fail because the data is scattered, outdated, or inaccessible. Before building, teams should check data sources, permissions, formats, quality, and retention rules. They should also define what data the system should never touch. This reduces surprises later.

The third step is process design. AI should not automate confusion. If a workflow has unclear ownership, broken approvals, or inconsistent rules, the team should fix those before adding AI. AI can then reduce work instead of amplifying disorder.

The fourth step is solution design. Teams should decide whether they need a simple automation, a retrieval system, an AI assistant, a full agent, a human-in-the-loop workflow, or a custom application. Not every workflow needs agentic autonomy. Many workflows only need extraction, summarization, routing, and review.

The fifth step is governance. Define data classes, access roles, approval points, logs, evaluation tests, and escalation paths. This should happen before launch, not after the first incident. Governance helps adoption because employees trust systems that behave predictably.

The sixth step is measurement. Each project should have a small set of metrics. Examples include time saved, error rate, first response time, review time, ticket volume, release lead time, rework rate, and user satisfaction. Without metrics, teams cannot tell the difference between a useful system and a flashy tool.

The seventh step is training. Employees need to know how to use the system, when to trust it, when to correct it, and when to escalate. Training should include examples from their own workflow. Generic AI training rarely changes daily behavior.

The eighth step is scale. After the first release works, teams can expand to more documents, more departments, more languages, more integrations, or more automation. Scale should follow proof. It should not start as a broad rollout without feedback.

This playbook applies across industries. A finance company may begin with invoice extraction. A SaaS company may begin with support summaries. A logistics firm may begin with document intake. A healthcare company may begin with internal knowledge search. A retail team may begin with product content automation. The pattern stays the same. Start with the workflow. Build the system. Measure the result.

90-Day AI Workflow Action Plan

| Phase | Timeline | Main action | Output |
|---|---|---|---|
| Discover | Weeks 1-2 | Map workflows, pain points, data sources, risk, and user roles. | Prioritized use case list and success metric. |
| Design | Weeks 3-4 | Define target process, AI function, integration, review gates, and governance. | Solution blueprint and delivery backlog. |
| Build | Weeks 5-9 | Develop the workflow, connect data, build UI, add logging, and create evaluation tests. | Working pilot or first production release. |
| Adopt | Weeks 10-12 | Train users, monitor outputs, collect corrections, and measure impact. | Adoption report and scale decision. |

Common Mistakes to Avoid

- Starting with a model choice before defining the workflow.

- Treating AI output as final work instead of a draft or recommendation.

- Skipping data governance because the first release is called a pilot.

- Measuring activity, such as prompts used, instead of business outcomes.

- Removing junior work without replacing it with structured learning.

- Letting departments use separate AI tools without security visibility.

- Ignoring cloud cost until usage scales.

The buyer rule is simple. Start smaller than the vision, but design with production in mind. This is how teams avoid pilot fatigue and build systems that last.

14. Conclusion: The Practical Market Thesis

The Q1 2026 Tech Market Report ends with a clear thesis. The technology market has entered a production phase for AI. Spending is large. Cloud demand is strong. Developers use AI often. Workers face faster skill change. Vietnam shows strong digital and AI readiness. Yet the market is not simple. The value of AI depends on how well companies redesign work.

Designveloper’s view is grounded in software delivery. The next wave will not reward vague AI transformation. It will reward systems that reduce manual steps, improve accuracy, shorten handoffs, and help teams scale. That means document intelligence, AI assistants, HR automation, finance workflows, support automation, AI-ready web apps, mobile products, VOIP workflows, and secure cloud systems.

The strongest companies will not replace thinking with AI. They will move people toward better thinking. They will let AI draft, extract, summarize, route, and recommend. They will keep humans in control of judgment, architecture, security, product decisions, customer empathy, and final approval. This balance will define mature AI adoption in 2026.

Designveloper can help buyers make that shift. As an AI-first software and automation partner in Vietnam, Designveloper combines AI engineering, workflow design, and product delivery. Our experience across custom software, web development, mobile development, UI/UX, VOIP, and AI-powered business software gives us the right base for this market. We do not see AI as a detached tool. We see it as a layer inside real products and operations.

For teams planning the next move, the best starting point is an AI workflow audit. Choose one process. Map the bottleneck. Check the data. Define the metric. Build a safe first release. Then scale what works. That is how AI becomes business value. That is also the practical message of Designveloper’s Q1 2026 Tech Market Report.

Recommended CTA: Book an AI Workflow Audit with Designveloper to identify the safest and highest-value AI workflows for your team.

15. Source Appendix

16. Methodological Notes for Editors

This report uses a market-report style rather than a blog-only style. It still follows SEO readability principles. The introduction places the focus keyword early. The sections use clear headings. The paragraphs stay short. The claims use direct sources. The charts break up the text and help readers scan the argument.

The report also separates market data from Designveloper interpretation. Market data describes what a source reports. Interpretation explains what that data means for buyers, product teams, and technology leaders. This distinction protects the report from overclaiming. It also makes the Designveloper point of view stronger because it shows how the company reads evidence.

The report avoids a single-cause view of AI and employment. Some sources show strong wage and productivity signals. Other sources show early-career pressure. These findings can coexist. AI can raise the value of some workers and pressure others at the same time. A careful report should show both sides and then provide practical guidance.

The report also avoids treating Vietnam only as a low-cost outsourcing market. The 2026 opportunity is broader. Vietnam has domestic digital adoption, AI readiness, global engineering demand, foreign R&D investment, and a maturing software ecosystem. That supports a stronger story around AI-first delivery, product engineering, and workflow automation.

For future updates, editors should refresh several figures every quarter. These include Gartner IT spending forecasts, cloud infrastructure revenue, AI spending forecasts, developer AI adoption surveys, Vietnam digital economy data, and labor market indicators. The article can remain evergreen if editors update the numbers and keep the core workflow thesis stable.

Editors should also add internal links to relevant Designveloper pages when publishing on the website. Useful internal link targets include AI development services, custom software development, web app development, mobile app development, VOIP app development, AI workflow articles, RAG articles, AI agents articles, HR automation articles, and software development cost or process guides. Links should support reader intent and should not repeat the same URL.

The strongest visual update would be a dedicated featured image. It should use the focus keyword or a concise title. It should avoid too much text. It should show AI, cloud, software delivery, and jobs as connected market forces. The report charts already support the body content. A website version could place the first chart within the first quarter of the article to support scroll depth and user comprehension.

Future versions can also add original survey data from Designveloper clients or prospects. Even small pulse surveys can strengthen the report if the questions are specific. Examples include AI workflow priority, biggest AI adoption barrier, most requested integration, cloud cost concern, developer tool policy, and internal AI governance status. First-party data would make future editions more distinctive.

The report should keep Designveloper’s voice practical. It should sound like a builder that understands technology and operations. It should not sound like a financial analyst copying market headlines. The best sentences connect a market signal to a delivery decision. That is the brand advantage.

The report can support sales conversations. A sales team can use the dashboard, workflow map, governance checklist, and 90-day action plan to start discovery. These sections show that Designveloper can help a buyer make decisions before writing code. That builds trust and moves the company away from commodity outsourcing.

17. Glossary

| Term | Simple meaning in this report |
|---|---|
| Agentic AI | AI systems that can plan steps, use tools, and act with some autonomy under defined rules. |
| RAG | Retrieval-augmented generation. A method that lets AI answer from selected documents or databases. |
| AI workflow automation | The use of AI plus software rules to reduce manual work inside a defined process. |
| Document intelligence | Software that can read, classify, search, summarize, extract, or redact documents. |
| Human-in-the-loop | A design where humans review, approve, or correct AI output before it creates impact. |
| Model routing | A system that sends different tasks to different AI models based on cost, risk, or quality needs. |
| FinOps | A practice that manages cloud spending through visibility, accountability, and optimization. |
| AI governance | Policies, controls, roles, logs, and tests that make AI use safer and more accountable. |
| Neocloud | A specialized cloud provider focused on AI workloads and high-performance compute. |
| Product and platform model | An operating model where cross-functional teams own reusable products or platforms. |
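
To make two of the glossary terms more concrete, the short Python sketch below shows one way model routing and human-in-the-loop review could fit together. It is an illustration under stated assumptions, not a description of any Designveloper system: the `Task` fields, the `small` and `large` tier names, and the cost figures are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    risk: str            # hypothetical labels: "low" or "high"
    needs_quality: bool  # True when output quality matters more than cost


# Hypothetical model tiers. The names and relative costs are placeholders,
# not real products or real prices.
MODELS = {
    "small": {"cost_per_call": 1},
    "large": {"cost_per_call": 10},
}


def route(task: Task) -> dict:
    """Pick a model tier by risk and quality needs, and flag human review."""
    # Model routing: only risky or quality-sensitive work goes to the costly tier.
    tier = "large" if (task.risk == "high" or task.needs_quality) else "small"
    return {
        "task": task.name,
        "model": tier,
        "cost": MODELS[tier]["cost_per_call"],
        # Human-in-the-loop: high-risk output waits for a person to approve it.
        "human_review": task.risk == "high",
    }


if __name__ == "__main__":
    for t in (
        Task("tag support ticket", risk="low", needs_quality=False),
        Task("draft contract summary", risk="high", needs_quality=True),
    ):
        print(route(t))
```

In this sketch the routing rule is a single condition. A real system would likely route on more signals, such as data sensitivity or latency, and would log each decision for governance.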