AI agents are making headlines with their powerful capabilities. They can autonomously interpret our requests, think about a solution, act on it, review their output, and improve over time. To build these smart agents effectively, you need a well-defined AI agent architecture. In this article, we’ll explain what the architecture is (with diagrams), why it’s important, and how you can build the right one for specific use cases. Let’s begin!

What Is AI Agent Architecture?

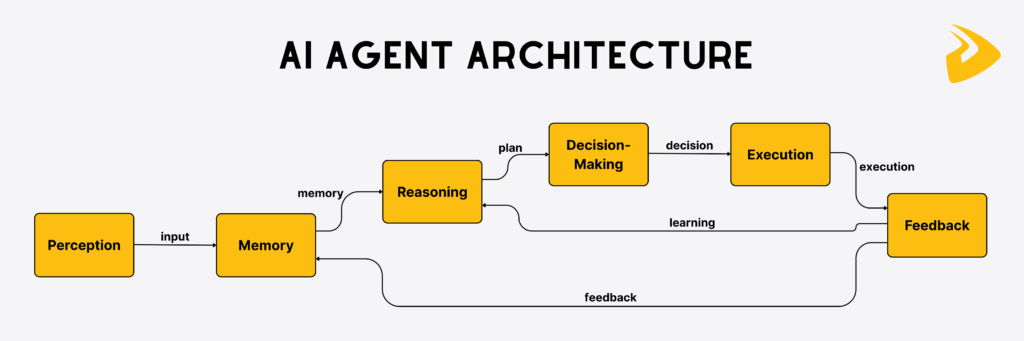

An AI agent architecture is the fundamental framework that defines how an AI agent is built and how it performs a task. This blueprint shapes how the agent perceives its surroundings, reasons, decides what to do, takes action, learns, and improves its behavior over time. The framework is modular, guiding the agent to execute tasks in a structured and organized manner. Some architectures adopt memory-based systems that help the agent recall past context and experiences to identify the best action. They also enable tool use to complete given tasks (e.g., web search, financial analysis, or follow-up email management) more effectively.

Why AI Agent Architecture Matters

AI agent architecture matters because it determines what an AI agent can and cannot do. It sets the rules that shape the agent’s behavior, from perceiving inputs to taking actions. Without a well-designed architecture, the agent may perform slowly or unpredictably, leading to unreliable or inefficient results (e.g., bias, hallucinations, or harmful actions).

Further, when tasks become more complex, the architecture decides how the agent can scale smoothly, adapt to new goals, and use additional tools without compromising its performance. Also, good architecture ensures that the AI agent handles data, reasons, and acts in real time to avoid compute waste. This is very useful for applications like robotics, autonomous vehicles, or financial trading.

If your business uses multi-agent systems, a good architecture keeps agents working together, sharing data, and integrating with existing software without breaking workflows.

Core Components of AI Agent Architecture

A typical architecture for an AI agent, whether digital or physical, covers the following five core layers:

1. Perception

Perception is the starting point of an AI agent architecture. It shows how the agent perceives or collects raw data from its environment. Without this layer, the agent would be blind or deaf, unable to communicate well with its surroundings.

Perception can happen in different forms, depending on agent types. For digital agents, input may involve text queries, logs from monitoring systems, or structured data from APIs. Meanwhile, physical AI agents, like robots, autonomous vehicles, or drones, receive sensor data as input through devices attached (e.g., cameras, microphones, or temperature detectors).

Once the agent has received the raw input, it’s converted into a standard format that the agent can interpret and process. For instance, a chatbot transforms a natural language query into tokens for analysis, while a self-driving car analyzes camera images to identify objects like traffic lights or pedestrians.
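To make the conversion step concrete, here is a minimal sketch of a perception layer. The `Observation` class and the `perceive` function are hypothetical names chosen for illustration, and the whitespace tokenizer is only a stand-in for the model-specific tokenizer a real chatbot would use.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Observation:
    """Hypothetical standard format shared by all downstream layers."""
    source: str                                   # e.g. "chat" or "sensor"
    tokens: list = field(default_factory=list)    # filled for text input
    readings: dict = field(default_factory=dict)  # filled for sensor input


def perceive(raw: Any) -> Observation:
    """Convert a raw text query or a sensor payload into an Observation."""
    if isinstance(raw, str):
        # Naive whitespace tokenization (assumption: stand-in for a real tokenizer).
        return Observation(source="chat", tokens=raw.lower().split())
    if isinstance(raw, dict):
        # Keep only numeric sensor values and coerce them to float.
        readings = {k: float(v) for k, v in raw.items() if isinstance(v, (int, float))}
        return Observation(source="sensor", readings=readings)
    raise TypeError(f"Unsupported input type: {type(raw).__name__}")


text_obs = perceive("Where is my order?")
sensor_obs = perceive({"temperature_c": 21, "label": "kitchen"})
```

Whatever the input type, the later layers only ever see an `Observation`, which is the point of normalizing at this stage.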

2. Memory

Memory enables AI agents to recall previous exchanges across interactions. Without memory, agents cannot adapt to context and fail to generate human-like, personalized answers over time.

There are two types of memory. Short-term memory helps the agent keep track of immediate tasks or conversations. For example, a customer service chatbot remembers what was exchanged earlier in the same chat session. Long-term memory allows the agent to retrieve session history, user preferences, and other knowledge to keep conversations smooth across sessions. When you end a session and start a new chat, the agent with long-term memory still ‘remembers’ what was said.

Physical AI agents have similar memory types, often called working (or operational) memory and persistent memory. For example, a robot vacuum with working (short-term) memory can remember which parts of a room it has already cleaned during its current run. Meanwhile, persistent memory enables a warehouse robot to keep a map of the shelf layout and common item locations.

Modern AI agents often use vector stores (e.g., FAISS, Pinecone, Milvus, or Weaviate) to store embeddings of multimodal data. This allows them to search for relevant data even if the input isn’t phrased the same way (“semantic search”).
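The two memory types and the retrieval step can be sketched in a few lines. This is a toy illustration, not how FAISS or Pinecone work internally: the `embed` function below is a bag-of-words counter standing in for a real embedding model, so it matches shared words rather than true meaning. All class and function names are assumptions made for this example.

```python
import math
from collections import Counter, deque


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class AgentMemory:
    def __init__(self, short_term_size: int = 3):
        # Short-term memory: only the most recent turns of the current session.
        self.short_term = deque(maxlen=short_term_size)
        # Long-term memory: (vector, text) pairs that persist across sessions.
        self.long_term = []

    def remember_turn(self, text: str) -> None:
        self.short_term.append(text)

    def store_fact(self, text: str) -> None:
        self.long_term.append((embed(text), text))

    def recall(self, query: str, top_k: int = 1) -> list:
        """Return the top_k stored facts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.long_term, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:top_k]]


memory = AgentMemory()
memory.store_fact("user prefers email over phone calls")
memory.store_fact("user ordered a blue backpack in march")
best = memory.recall("which backpack did the user order")
```

Swapping `embed` for a real embedding model and `long_term` for a vector store keeps the same shape: store vectors, then rank them by similarity to the query.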

3. Reasoning & Decision-Making

This is the core intelligence layer of an AI agent. Once the agent has received and processed the data, it must reason and decide what to do next. Traditional AI agents may rely on rule-based logic or symbolic reasoning (e.g., “if X, then Y”). Meanwhile, modern agents employ machine learning or large language models to interpret context logically and generate responses.

For instance, when a customer support chatbot receives a question, it’ll decide whether to answer directly, pull from a knowledge base, or escalate to a human representative. Similarly, if a robot detects an obstacle on its way, it’ll reason whether to stop, turn, or change its path.
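The chatbot example above can be written as a small rule-based decision function. This sketch shows the traditional "if X, then Y" style; the keyword list, the `confidence` score, and the 0.4 threshold are all illustrative assumptions, and an LLM-based agent would replace these rules with a model call.

```python
def decide(question: str, knowledge_base: dict, confidence: float) -> str:
    """Pick one of three actions for a support query.

    `confidence` is a hypothetical score in [0, 1] for how sure the agent is
    that it understood the question.
    """
    escalation_keywords = {"refund", "complaint", "lawyer"}  # assumed list
    words = set(question.lower().replace("?", "").split())

    # Sensitive topics or low confidence go to a human representative.
    if words & escalation_keywords or confidence < 0.4:
        return "escalate_to_human"
    # If any known topic appears in the question, consult the knowledge base.
    if any(topic in words for topic in knowledge_base):
        return "lookup_knowledge_base"
    return "answer_directly"


kb = {"password": "Use the reset link on the login page."}
```

Calling `decide("How do I reset my password?", kb, 0.9)` routes the query to the knowledge base, while a question mentioning a refund is escalated regardless of confidence.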

4. Action & Execution

After reasoning, the AI agent needs to turn decisions into actions. This is where the architecture defines how the agent interacts with the outside world. Digital agents may call APIs, run scripts, generate text, or control other software. For instance, a chatbot answers a question, assigns a task, or books a meeting. Physical agents, on the other hand, use actuators to move cameras, arms, or wheels and execute physical tasks. For example, a robot vacuum decides a room is dirty and physically moves to clean it.
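For a digital agent, the action layer is often a lookup from a decision name to a function that carries it out. The sketch below assumes two made-up actions, `send_reply` and `book_meeting`, which only return strings here; in a real system they would call a messaging or calendar API.

```python
from typing import Callable, Dict


def send_reply(text: str) -> str:
    # Placeholder: a real implementation would call a messaging API.
    return f"reply sent: {text}"


def book_meeting(day: str) -> str:
    # Placeholder: a real implementation would call a calendar API.
    return f"meeting booked for {day}"


# Hypothetical registry mapping decision names to executable actions.
ACTIONS: Dict[str, Callable[[str], str]] = {
    "reply": send_reply,
    "book_meeting": book_meeting,
}


def execute(decision: str, argument: str) -> str:
    """Run the action chosen by the reasoning layer, or fail safely."""
    action = ACTIONS.get(decision)
    if action is None:
        return f"unknown action: {decision}"
    return action(argument)
```

Keeping the registry separate from the reasoning code means new actions can be added without touching the decision logic.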

5. Feedback Loop

The final layer is a feedback loop, but not all AI agent architectures have this process since some are only reactive. It reflects how the AI agent can review its performance, learn from results, update its memory with the outcomes, and improve for future actions.

There are various approaches for the agent to get feedback:

- Supervised learning – Update models based on labeled training data.

- Reinforcement learning – Reward successful decisions and penalize failures.

- Human-in-the-loop – Involve human experts to evaluate and guide the agent’s outputs.

- Self-critique – The agent self-assesses its own decisions and actions by using outcome comparisons, reasoning traces, or internal consistency checks.

For instance, a Q&A chatbot adapts its answers based on whether users find them useful. Meanwhile, a self-driving vehicle also refines its driving strategy after processing edge cases in real traffic and learning from the outcomes. With the feedback loop, your AI agent can evolve with data, context, and usage.
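As a rough illustration of the reward-and-penalize idea, the sketch below keeps a running average of user feedback per answer style and prefers the style with the highest average. It is a deliberately simplified, greedy stand-in for reinforcement learning (there is no exploration step), and the class and action names are invented for this example.

```python
class FeedbackLearner:
    """Tracks a running average reward per action and prefers the best one."""

    def __init__(self, actions):
        self.values = {a: 0.0 for a in actions}   # estimated usefulness
        self.counts = {a: 0 for a in actions}     # feedback received so far

    def update(self, action: str, reward: float) -> None:
        # Incremental mean: new = old + (reward - old) / n
        self.counts[action] += 1
        n = self.counts[action]
        self.values[action] += (reward - self.values[action]) / n

    def best_action(self) -> str:
        return max(self.values, key=self.values.get)


learner = FeedbackLearner(["short_answer", "detailed_answer"])
for reward in (1.0, 0.0, 0.0):        # users mostly disliked short answers
    learner.update("short_answer", reward)
for reward in (1.0, 1.0, 0.0):        # users mostly liked detailed answers
    learner.update("detailed_answer", reward)
```

After these six feedback signals, `learner.best_action()` returns `"detailed_answer"`, because its average reward (2/3) is higher than that of short answers (1/3).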

Popular AI Agent Architectures (With Diagrams)

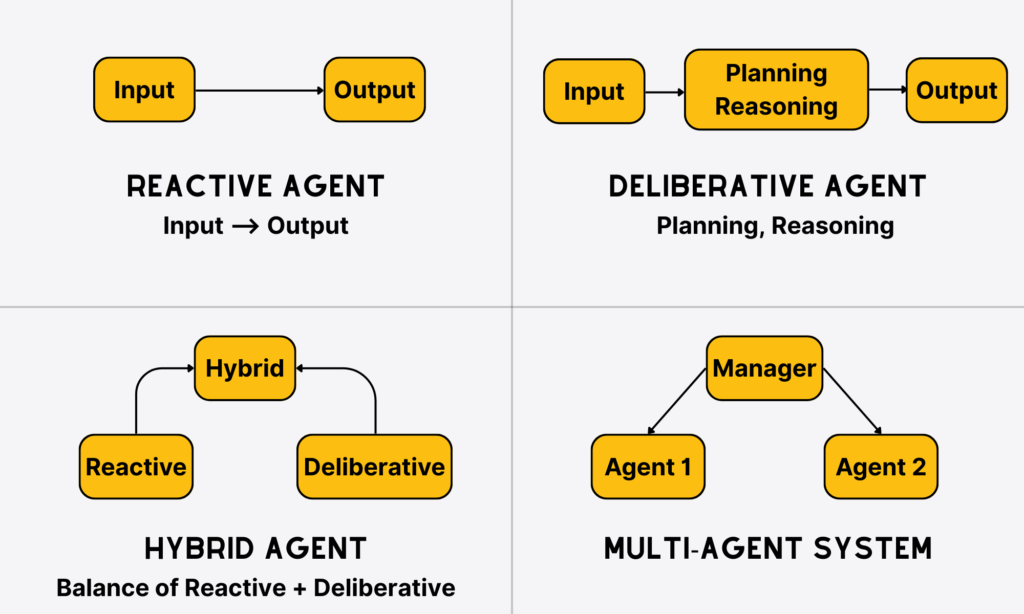

You now understand the core components of a typical AI agent architecture. However, not every architecture includes all of them. Depending on their ultimate goals, different architectures work differently. So, in this section, we’ll walk through common architectures for AI agents:

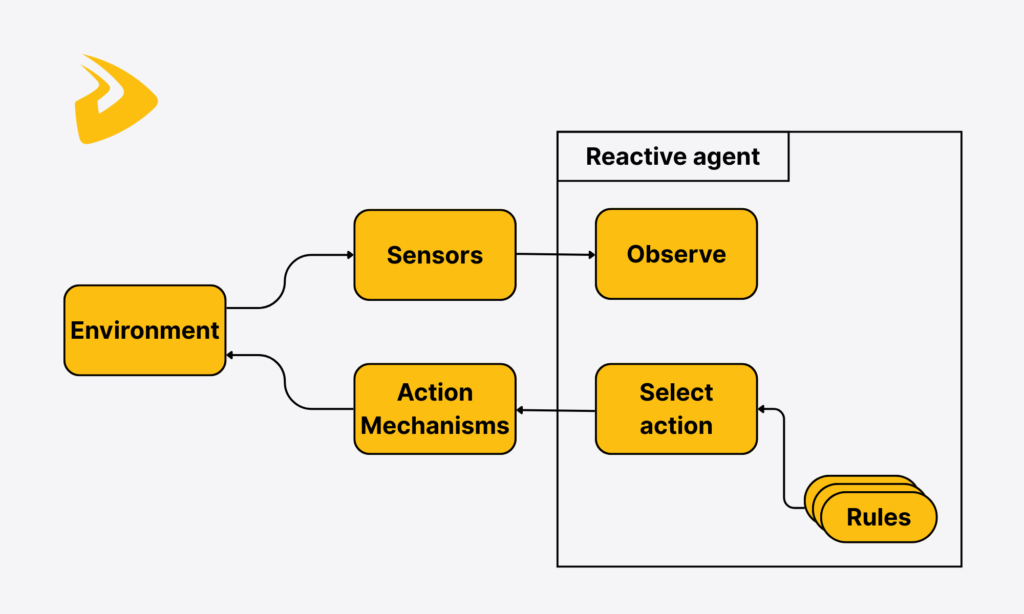

Reactive Agents

This is the most fundamental type of AI agent. Reactive agents don’t have memory, don’t think ahead, and don’t try to understand their surroundings. They simply react to the raw input given at the moment, without further thinking. Think of them like reflexes in humans. When you touch something hot, your hand pulls back immediately without the need for your brain to reason. Reactive agents are quick and simple, but neither flexible nor intelligent.

How they work: Reactive agents work by taking input directly from the environment. They then use predefined rules or mappings to generate immediate actions without considering past experiences or reasoning through the current situation. For example, if a factory robot detects an obstacle, it immediately stops working.

When to use: With their capabilities, reactive agents prove useful in:

- Repetitive and predictable tasks that don’t require further planning or complex reasoning.

- Environments where speed is more crucial than intelligence.

- Devices with low compute power.

Best use cases: Spam filters, thermostats, assembly line robots, NPCs in simple video games.
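A thermostat is a handy way to see what "predefined rules" means in practice. In this minimal sketch, the function name, target temperature, and tolerance are illustrative assumptions.

```python
def thermostat(temperature_c: float, target_c: float = 21.0, tolerance: float = 0.5) -> str:
    """Map the current reading straight to an action; no memory, no planning."""
    if temperature_c < target_c - tolerance:
        return "heat_on"
    if temperature_c > target_c + tolerance:
        return "cool_on"
    return "idle"
```

The same input always produces the same output, which is what makes reactive agents fast and predictable but unable to adapt.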

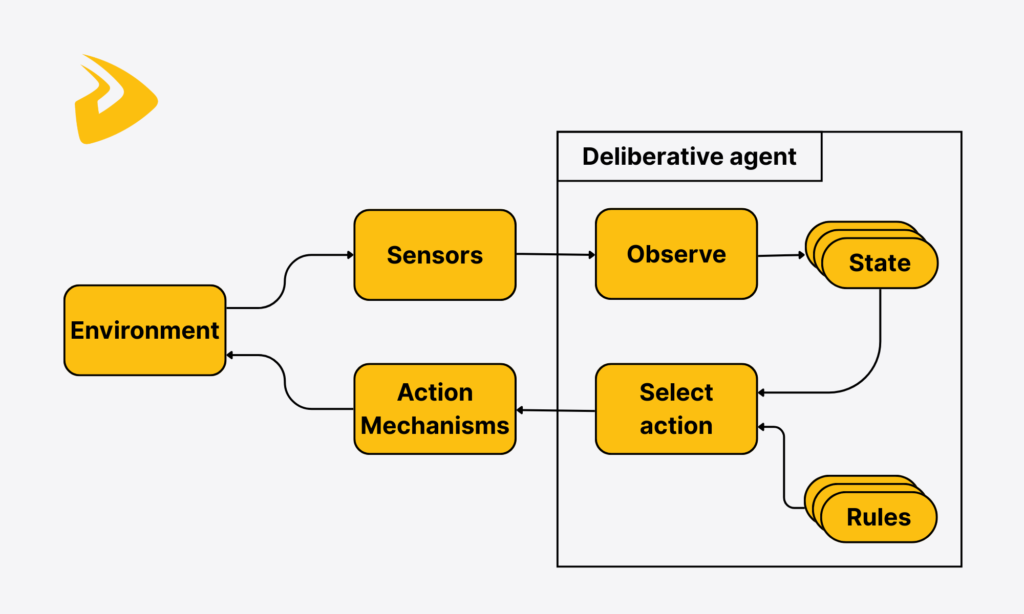

Deliberative Agents

These are thinking agents. They try to understand the input or surroundings, perform planning, and reason about future outcomes before taking actions. Deliberative agents are intelligent and strategic, but they run more slowly and require more computing power.

How they work: Deliberative agents receive input and analyze the environment. They then use reasoning, symbolic AI, search trees, or planning algorithms to decide the best action before generating the output. For instance, a robot may map its environment, evaluate possible paths, and select the optimal one.

When to use: Deliberative agents prove helpful in:

- Tasks that require strategic decision-making or logical explanations for actions.

- Complex environments where planning matters.

Best use cases: Medical diagnostic AI that reasons with patient records, route planning in GPS systems, and robotic tasks.
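The robot path-planning example can be sketched with breadth-first search, one of the simplest planning algorithms. Rather than literally simulating every path, BFS explores the map outward from the start and returns a shortest route. The grid format (`#` for obstacles) and the function name are assumptions made for this illustration.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Breadth-first search on a grid; returns a shortest list of cells or None.

    `grid` is a list of strings where '#' marks an obstacle.
    """
    rows, cols = len(grid), len(grid[0])
    frontier = deque([start])
    came_from = {start: None}          # also serves as the visited set

    while frontier:
        current = frontier.popleft()
        if current == goal:
            # Walk back through came_from to rebuild the path.
            path = []
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] != "#" and (nr, nc) not in came_from:
                came_from[(nr, nc)] = current
                frontier.append((nr, nc))
    return None                        # goal is unreachable


grid = [
    "..#",
    ".##",
    "...",
]
path = plan_path(grid, (0, 0), (2, 2))
```

Here the planner routes around the obstacles by going down the left column and then along the bottom row. On large maps, this up-front search is the extra cost that deliberative agents pay for better decisions.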

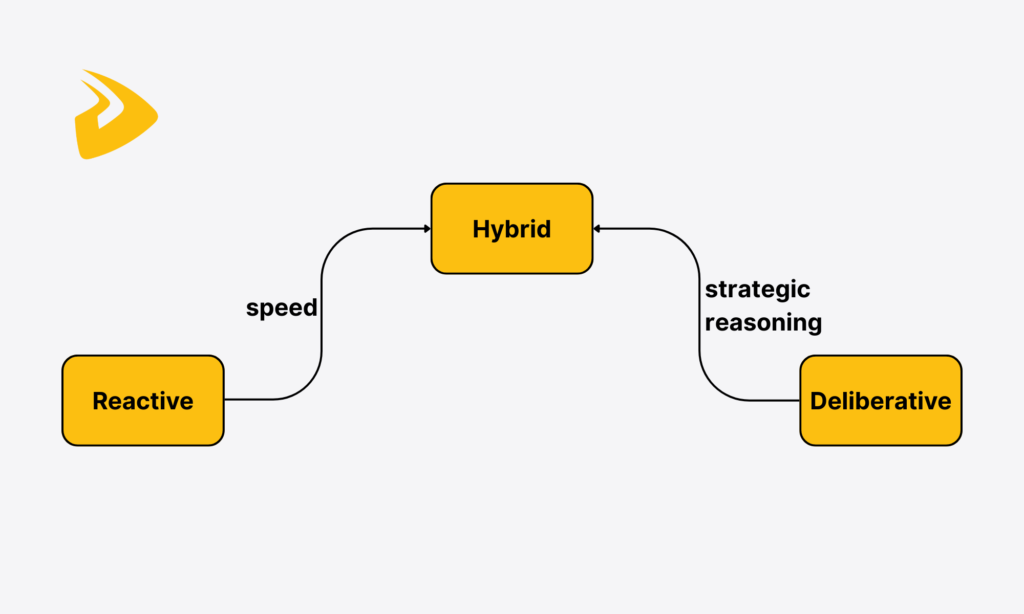

Hybrid Agents

Hybrid agents combine the strengths of reactive and deliberative agents, namely speed and strategic reasoning. This layered combination allows hybrid agents to respond rapidly while still planning ahead. However, hybrid agents can be complex to develop and computationally expensive to run.

How they work: At the reactive layer, hybrid agents process the input immediately and take instant actions. Then, the deliberative layer enables them to execute long-term planning. For example, a virtual assistant can immediately react to a user’s simple greetings and also schedule sophisticated tasks.

When to use: Hybrid agents prove useful in complex environments requiring quick responses and long-term strategies.

Best use cases: Autonomous vehicles, industrial robots, or virtual assistants.
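One common way to layer the two behaviors is to let the reactive layer run first and fall back to planning only when nothing urgent is detected. The sketch below assumes a made-up observation format with an `obstacle_distance_m` reading and a `route_minutes` table; the 1-meter threshold is also illustrative.

```python
def reactive_layer(observation: dict):
    """Return an immediate action for urgent conditions, else None."""
    if observation.get("obstacle_distance_m", float("inf")) < 1.0:
        return "emergency_stop"
    return None


def deliberative_layer(observation: dict) -> str:
    """Slower planning step: choose the route with the lowest estimated time."""
    routes = observation.get("route_minutes", {})
    if not routes:
        return "hold_position"
    return f"follow_{min(routes, key=routes.get)}"


def hybrid_agent(observation: dict) -> str:
    # Reflexes take priority; planning runs only when nothing is urgent.
    return reactive_layer(observation) or deliberative_layer(observation)
```

With an obstacle 0.4 m away, the agent stops immediately no matter what the route table says; otherwise it picks the fastest route.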

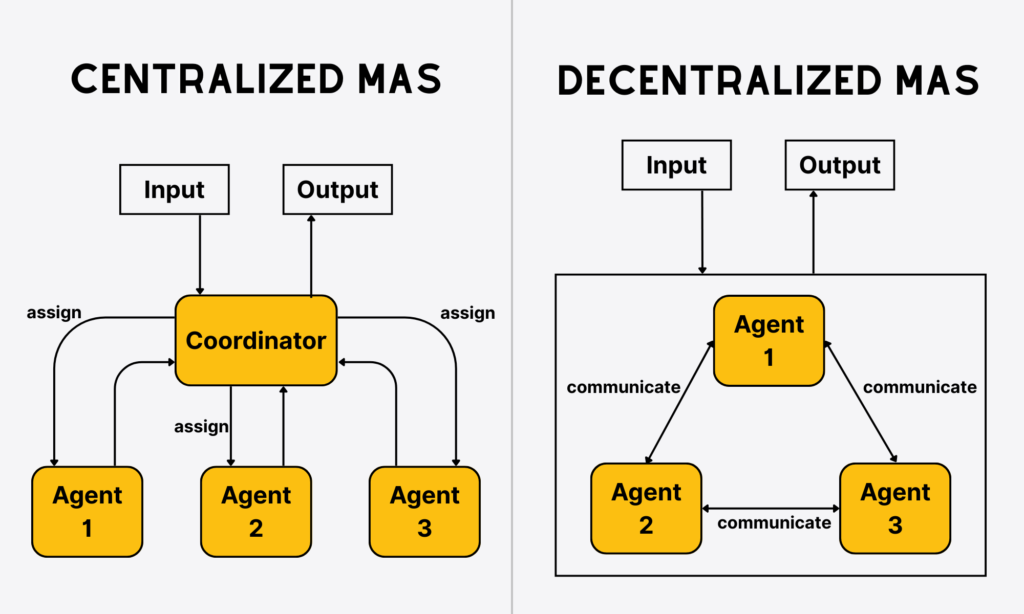

Multi-Agent Systems (MAS)

MAS gathers various AI agents to work together. Each agent can be reactive, deliberative, or hybrid. They collaborate with each other to achieve individual or shared goals. Multi-agent systems are scalable and cooperative, but possibly challenging to coordinate and manage.

How they work: In multi-agent systems, AI agents work independently but also communicate with each other to complete a task. How they coordinate depends largely on the system’s design.

- For centralized coordination, there’s a special agent (“coordinator”) assigning tasks, monitoring conflicts, and gathering results. For example, in a ride-sharing app, a central server (a manager agent) will assign drivers to customers.

- For decentralized coordination, all agents work in a leaderless team. They exchange data, negotiate, or follow simple rules to achieve a collective outcome. For instance, drones in a swarm use simple rules to fly in a formation, without a leader drone.

When to use: MAS works best in:

- Large-scale, distributed tasks that go beyond the capability of a single agent.

- Situations in which flexibility and scalability matter.

Best use cases: Smart grids (agents control electricity distribution), supply chain logistics (agents for suppliers, transportation fleets, warehouses), traffic management systems.
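Centralized coordination, as in the ride-sharing example, can be sketched as a coordinator function that hands each customer the nearest free driver. Positions are simplified to a single street coordinate, and the greedy matching rule is an assumption made for brevity, not how any particular ride-sharing service works.

```python
def assign_drivers(drivers: dict, customers: dict) -> dict:
    """Greedy centralized coordination: each customer gets the nearest free driver.

    Positions are 1-D street coordinates to keep the sketch small.
    """
    free = dict(drivers)               # copy so the caller's dict is untouched
    assignments = {}
    for customer, position in customers.items():
        if not free:
            break                      # more customers than drivers
        nearest = min(free, key=lambda d: abs(free[d] - position))
        assignments[customer] = nearest
        del free[nearest]              # a driver serves one customer at a time
    return assignments


drivers = {"driver_a": 0, "driver_b": 10}
customers = {"alice": 9, "bob": 2}
result = assign_drivers(drivers, customers)
```

In this run, Alice is matched with `driver_b` and Bob with `driver_a`. A decentralized design would instead let drivers negotiate among themselves, trading the single point of control for more resilience.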

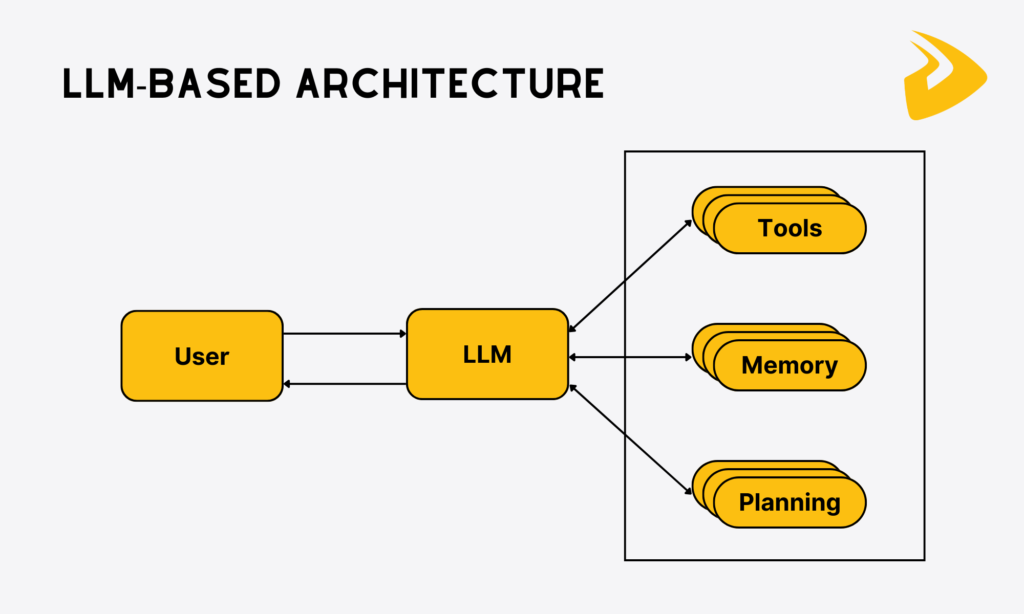

The Rise of LLM Agent Architectures

The introduction of large language models (LLMs) has changed the way agents work. Before LLMs, agents had limited capabilities. They followed fixed rules and had limited tool use and memory, which made it hard for them to complete complex tasks that require reasoning.

But LLMs like GPT, Llama, or Claude enhance natural language understanding and allow agents to understand even unclear instructions, adjust their behavior mid-conversation, interact naturally, and sequence tasks dynamically. As the LLM market grows (with a projected CAGR of 20.57% over the next five years), LLM agent architectures will gain traction accordingly.

How they work: LLM-powered agents will receive natural language input, reason, and use memory or tools (if needed) to generate appropriate outputs. They can even integrate databases, vector stores, or APIs to improve intelligence.

When to use: LLM agents work best for knowledge-intensive tasks that require reasoning, human-like communication, and adaptation to evolving changes.

Best use cases: Customer service chatbots, AI coding assistants, research assistants, or complex workflows (multi-step reasoning or retrieval-augmented generation).
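The reason, use a tool, then answer loop can be sketched without any real model. In the example below, `fake_llm` is a hard-coded stand-in for an actual LLM call, and the `TOOL:` / `FINAL:` text protocol is an invented convention for this illustration; real frameworks define their own formats.

```python
def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns a canned tool request or answer."""
    if "Observation:" in prompt:
        result = prompt.rsplit("Observation:", 1)[1].strip()
        return "FINAL: The total is " + result
    return "TOOL: calculator 19 + 23"


def calculator(expression: str) -> str:
    """Tiny tool that only supports 'a + b' with integers."""
    left, right = expression.split("+")
    return str(int(left) + int(right))


TOOLS = {"calculator": calculator}


def run_agent(question: str, llm=fake_llm, max_steps: int = 3) -> str:
    prompt = f"Question: {question}"
    for _ in range(max_steps):
        reply = llm(prompt)
        if reply.startswith("FINAL:"):
            return reply[len("FINAL:"):].strip()
        # Otherwise parse "TOOL: <name> <input>" and feed the result back in.
        _, name, tool_input = reply.split(" ", 2)
        observation = TOOLS[name](tool_input)
        prompt += f"\nObservation: {observation}"
    return "stopped: step limit reached"


answer = run_agent("What is 19 + 23?")
```

The `max_steps` limit matters in practice: it keeps the agent from looping forever if the model never produces a final answer.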

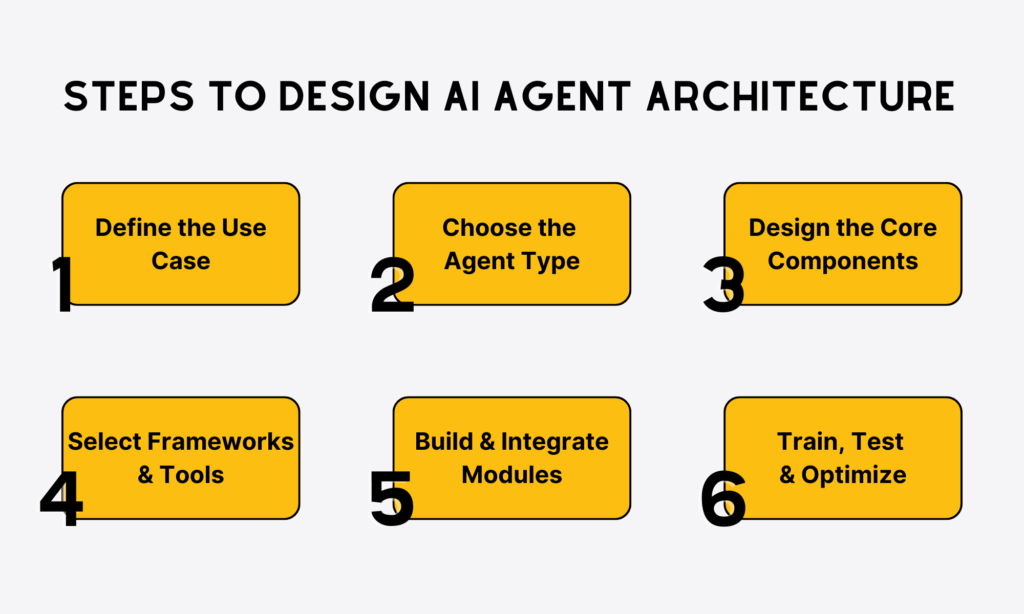

Step-by-Step Guide to Designing an AI Agent Architecture Diagram

You’ve seen different AI agent architectures and how they work. If you want to build an effective architecture for your AI agent, follow this guide:

Step 1. Define the Use Case

You cannot start designing an agent architecture without considering your existing problem that requires AI to address. So, the first and crucial step is to identify specific use cases.

Ask yourself (and consult relevant stakeholders, such as senior management): What does my business want the AI agent to achieve? It may be answering FAQs for customer support, identifying user intent, or escalating complex queries to the right reps. Or your business may want a trading agent to analyze market data, forecast trends, and execute automated trades.

Clarifying use cases helps you avoid designing over-complex or unclear diagrams.

Step 2. Choose the Agent Type

Based on your identified problems or use cases, you can decide the right agent type and architecture. If your use cases require quick responses to inputs without involving past interactions or reasoning, reactive agents work best. If your agent works toward specific goals by using reasoning (e.g., a delivery drone identifying the best path to drop off packages), opt for goal-based agents. Meanwhile, learning agents are more useful if you want the agent to learn from outcomes and improve over time. Selecting the right agent type gives your business the right logic and complexity to address existing problems.

Step 3. Design the Core Components

Next, you add the core components to the diagram based on the agent type you chose. These components can vary, but often cover the following crucial building blocks:

- Perception: Gathers data from the environment, like user inputs, sensors, or APIs.

- Reasoning: Handles the raw data and makes the right decisions (e.g., planning or logic).

- Memory: Stores past knowledge for future use (short-term context or long-term database).

- Action: Generates outputs, like sending responses or triggering certain workflows.

Regardless of your chosen components, your architecture diagram should clarify how these components connect and exchange information to reach the shared goals.
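To show how the four building blocks connect, here is a small sketch of an agent whose `step` method passes data from perception to reasoning to action, with memory shared along the way. The class, its canned responses, and the greeting rule are all illustrative assumptions.

```python
class SimpleAgent:
    """Wires perception -> reasoning -> action, with memory shared between steps."""

    def __init__(self):
        self.memory = []                       # past (observation, decision) pairs

    def perceive(self, raw: str) -> str:
        return raw.strip().lower()

    def reason(self, observation: str) -> str:
        # Use memory: greet differently if the user has said hello before.
        seen_before = any(obs == observation for obs, _ in self.memory)
        if observation == "hello":
            return "greet_again" if seen_before else "greet"
        return "ask_clarification"

    def act(self, decision: str) -> str:
        responses = {
            "greet": "Hi! How can I help?",
            "greet_again": "Hello again!",
            "ask_clarification": "Could you tell me more?",
        }
        return responses[decision]

    def step(self, raw: str) -> str:
        observation = self.perceive(raw)
        decision = self.reason(observation)
        self.memory.append((observation, decision))
        return self.act(decision)


agent = SimpleAgent()
first = agent.step(" Hello ")
second = agent.step("hello")
```

The arrows in your diagram correspond to the calls inside `step`, so drawing the diagram first makes the code structure almost mechanical.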

Step 4. Select Frameworks and Tools

Once you’ve selected the essential components for the AI agent architecture, opt for the suitable set of tools and frameworks to turn your paper blueprint into a working solution. Below are several popular tools you may consider to build a working AI agent:

- LangChain – An open-source, composable framework that helps you build LLM applications, such as RAG systems and agents, by connecting various components.

- AutoGen – An open-source framework by Microsoft to build and orchestrate multi-agent systems.

- CrewAI – An open, Python-based framework orchestrating various autonomous AI agents to work together and implement complex tasks.

- Ray – An open-source framework that offers a distributed computing layer to scale AI and Python-based agents.

Choosing the right tech stack helps your team develop an effective AI agent that works seamlessly toward the defined goals.

Step 5. Build and Integrate Modules

Now that you have chosen the right tools, it’s time to develop modules and map out how they connect inside the architecture. In particular, perception modules involve sensors, text parsers, and APIs, while reasoning modules cover LLMs, planning algorithms, and logic engines. Action modules, in turn, handle sending messages, controlling actuators, and running workflows.

Use arrows and blocks to make your diagram cleaner and easier to understand. This design indicates how data flows between modules, helping your development team and even non-technical stakeholders understand integration points between modules before coding.

Step 6. Train, Test, and Optimize

The final step involves training, testing, and optimizing your agent’s performance. Accordingly, you can use simulation environments (e.g., dialogue sandboxes or trading simulators) to test how the agent makes decisions without causing real-life risks. Further, adopt performance metrics (e.g., accuracy, latency, or goal completion rate) to measure the agent’s operation. You can also continuously optimize the agent by fine-tuning parameters, adjusting workflows, or retraining models. All these measures ensure your architecture is both functional and reliable in real-world cases.
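A basic evaluation harness for the metrics mentioned above might look like the following sketch. The `toy_agent` function and the three test cases are made up for illustration; in practice you would plug in your own agent and a much larger test set.

```python
import time


def evaluate(agent_fn, test_cases):
    """Run the agent over (input, expected) pairs and report simple metrics."""
    correct = 0
    latencies = []
    for query, expected in test_cases:
        start = time.perf_counter()
        output = agent_fn(query)
        latencies.append(time.perf_counter() - start)
        correct += output == expected
    return {
        "accuracy": correct / len(test_cases),
        "avg_latency_s": sum(latencies) / len(latencies),
    }


def toy_agent(query: str) -> str:
    # Hypothetical intent classifier used only to exercise the harness.
    return "greeting" if "hello" in query.lower() else "other"


report = evaluate(
    toy_agent,
    [("Hello!", "greeting"), ("Refund?", "other"), ("hello there", "other")],
)
```

Here the agent gets two of three cases right, so `report["accuracy"]` is about 0.67. Tracking the same report after every change shows whether an optimization actually helped.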

Best Practices for Building an Effective AI Agent Architecture Diagram

In addition to the step-by-step guide we gave, there are several best practices to develop an effective AI agent architecture.

Modular design for flexibility

With a modular design, the AI agent architecture is divided into separate, reusable components, including perception, reasoning, memory, and action. Not all agent architectures require all these components. For example, a reactive agent doesn’t need reasoning and memory layers in its architecture diagram. A modular system lets you add or remove modules (e.g., vision processing or NLP) easily and makes it clear how each one performs its function and interacts with the others. This is very useful for future scalability and maintenance, because you can change one module without disrupting or redesigning the entire architecture.

Continuous learning with feedback loops

Feedback loops enable the AI agent to make progress over time. By learning from past actions, mistakes, or successes, the agent can reflect on its performance and improve its future decisions. This allows AI to adapt to real-world, dynamic situations rather than staying static and repeating the same failures. Additionally, the agent can update its knowledge and strategy semi-automatically based on feedback. This reduces the need to manually retrain models.

Monitoring and evaluation metrics

Choose the right metrics to assess and track the agent’s performance. These metrics vary depending on your specific use cases and expectations toward the agent. But some common indicators for most cases include accuracy, response time, user satisfaction, or task success rate. Besides, you can consider creating dashboards or logging systems to monitor how the agent behaves over time.

These measures help your team identify issues early and ensure the agent operates within business-relevant, safe, and compliant scopes. This is very important if your AI agent works in highly regulated sectors like healthcare, law, or finance.

Human-in-the-loop for critical decisions

AI is not perfect and has not reached human-level judgment, especially in high-stakes situations. Its outputs may not be reliable or convincing enough for final decisions. Therefore, human review or approval is still necessary. For instance, a medical agent can recommend possible treatments, but a doctor must verify these solutions to avoid serious mistakes. With humans in the critical decision loop, AI-generated outputs will be more reliable and useful, increasing user trust in AI systems.
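An approval gate is one simple way to put a human in the loop. In this sketch, the always-review list, the `risk_score` input, and the 0.7 threshold are illustrative assumptions, and `approve` is any callback that asks a human reviewer and returns `True` or `False`.

```python
def requires_review(action: str, risk_score: float, threshold: float = 0.7) -> bool:
    """Flag actions that are high-risk or on an always-review list."""
    always_review = {"prescribe_treatment", "transfer_funds"}  # assumed list
    return action in always_review or risk_score >= threshold


def execute_with_oversight(action: str, risk_score: float, approve) -> str:
    """Run low-risk actions directly; route the rest through a human callback."""
    if requires_review(action, risk_score):
        if not approve(action):
            return f"{action}: rejected by reviewer"
        return f"{action}: executed after approval"
    return f"{action}: executed automatically"
```

For example, `execute_with_oversight("prescribe_treatment", 0.1, approve=doctor_signoff)` would always wait for the reviewer, where `doctor_signoff` is whatever review function your team provides.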

Challenges in AI Agent Architecture

AI agent architectures, albeit useful, still come with several limitations. Each of these challenges requires specific attention when you design, deploy, and maintain smart agents:

Data quality issues

AI agents depend heavily on their training data and the real-time information they process. The quality of such data significantly impacts AI-generated outputs. For example, if a healthcare chatbot is trained on biased, incomplete, and old data, it can fail to identify symptoms in underrepresented groups, make inaccurate suggestions, and even provide harmful advice. That’s why agents need strong data pipelines, preprocessing modules, and robust mechanisms to filter or verify input data to ensure its quality.

Latency & scaling

Latency means delays in response times, while scaling refers to processing a growing number of tasks or users at the same time. These problems may impact an agent’s performance in real-world situations. For example, if a trading agent responds late to a trading signal, it may miss a market opportunity to finalize a good deal. To avoid this problem, you need to develop efficient pipelines, leverage parallel processing frameworks (e.g., Ray or Dask), and deploy AI agents on scalable infrastructure (e.g., edge computing or cloud servers).

Security & privacy concerns

Agents may risk breaching or misusing sensitive data (e.g., private chats or medical records) if not correctly secured. Further, attackers can exploit any vulnerabilities in insecure communication between agents to steal user data or inject harmful prompts.

For this reason, your team should develop AI agents with privacy and security in mind. Also, consider security best practices, like robust encryption, access control, and secure APIs to ensure compliance with regulations (e.g., GDPR or HIPAA).

Ethical decision-making

AI agents can sometimes encounter ethically complex situations, like a self-driving car deciding how to behave in a crash situation. Without ethical frameworks, agents can make unfair, biased, or harmful decisions that significantly reduce user safety and public trust. For this reason, besides privacy and security, ethical considerations should be built in by default. They should include explainable reasoning, human-in-the-loop oversight for sensitive tasks, and bias detection tools.

Real-World Applications of AI Agents

So with different AI agent architectures, what can you build for real-world situations? Let’s dive into the four common applications of AI agents in this section.

Conversational AI and customer service

Customer support chatbots are developed to communicate with people using text-based and voice messages. They use natural language processing (NLP) and large language models (LLMs) to understand customer queries easily, extract relevant knowledge, and build human-like responses. These AI agents help humans handle repetitive customer inquiries around the clock, enhance response times, and reduce call center costs.

Robotics and autonomous vehicles

These physical AI agents combine sensors (cameras, LIDAR, GPS), reasoning systems (decision-making, path planning), and actuators (robotic arms, motors). They perform tasks that are too dangerous, repetitive, or complex for people, improving human safety and operational efficiency. Some common examples of these physical AI agents include self-driving cars from Tesla or Google, and warehouse robots like those from Amazon Robotics.

Healthcare and diagnostics

AI agents support doctors in diagnoses, treatment planning, and remote patient monitoring. They combine reasoning or predictive modeling with massive volumes of data (medical history, imaging, lab tests, research findings) to reduce diagnostic errors, accelerate treatment, and customize healthcare. Some common use cases include AI radiology assistants that detect tumors, virtual health assistants, and drug discovery agents.

Financial trading and fraud detection

AI agents process real-time financial data, forecast market trends, automate trades, and discover suspicious activities. They can act 24/7 and identify patterns invisible to humans, allowing them to seize market opportunities and prevent fraud at scale. Some popular AI agent applications include algorithmic trading bots, fraud detection in banking transactions, and credit risk assessment systems.

Conclusion

An AI agent architecture plays a crucial role in shaping how the agent should behave to handle specific use cases, whether answering common questions, triggering workflows, or moving a robot’s arm. Each architecture includes different components, depending on what problems you want the AI to address. So if you plan to build an effective AI agent for your business, consider your use case first. This helps you identify the right agent type and tech stacks to realize your AI idea. In case you’re looking for expert help in the AI journey, Designveloper is a perfect partner to cooperate with.

As Vietnam’s leading software and AI development company, we stand out with a proven track record across sectors (like healthcare, finance, or e-commerce), mastery of cutting-edge technologies (e.g., LangChain, AutoGen, or CrewAI), and excellent communication.

One of our notable projects is Sensiotec, the Virtual Medical Assistant®. It measures heart and respiration rate and movement, as well as provides important “spot” and “trend” data to the monitoring devices of nursing staff without the need for electrodes to touch the patient or pads that contact another surface.

We also successfully deployed a product catalog-based advisory chatbot built inside the client’s website. This chatbot sends specific product information to customers, suggests personalized products based on user needs, and automatically sends confirmation emails to users.

Our solutions help clients increase organic traffic and attract more potential customers. We also receive high appreciation for our dedicated collaboration and excellent quality. With Agile frameworks, we’re committed to delivering your desired solutions on schedule and within budget. Talk with us and realize your AI idea!
