Given how many AI projects fail between pilot and production, it seems there must be a common flaw in the rollout process. To resolve this, CIOs are looking to redesign how organizations test, fail, and operationalize technology. Those are not easy tasks, but it helps to understand which part of the system broke first.

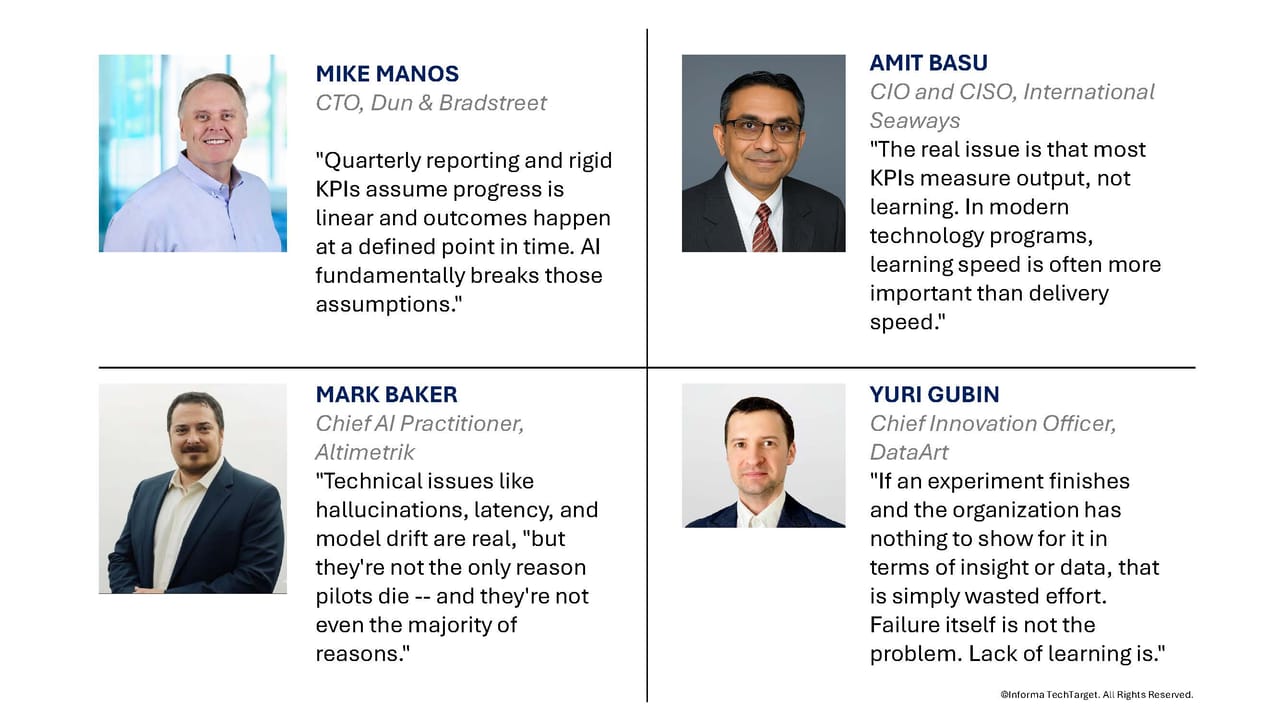

“Quarterly reporting and rigid KPIs assume progress is linear and outcomes happen at a defined point in time. AI fundamentally breaks those assumptions,” Mike Manos, CTO at Dun & Bradstreet, said.

In short, linear expectations crash into loopy events, which puts a spin on reality that leaves everyone dizzy.

“Model performance can drift with data changes, user behavior, and policy updates, so a ‘set it and forget it’ KPI can reward the wrong thing, too late,” Manos said.

The penalty for CIOs, however, comes from the time lag between the misread KPI signal and the CIO’s moves to correct it. Timing is everything, and “by the time a quarterly metric flags a problem, the root cause has already compounded across workflows,” Manos said.

That means that while AI is fast, CIOs must act just as fast — or faster. The question: How can humans possibly move faster than AI and KPI-driven failures?

Success hinges on getting in sync with and ahead of AI instead of trying to outrun it.

“In an AI-accelerated loop, judgment has to be applied continuously as signals stream in,” Derek Perry, CTO of Sparq, a US-based software engineering consultancy, said.

That, or “embrace predictive analytics and diagnostic tools that provide real-time insights, allowing them to intervene before even minor issues escalate,” David Vidoni, CIO at Pegasystems, a global software company, said.

Rethinking the learning loop

Waiting until the end of a POC to figure out why a concept doesn’t scale is clearly too late, but neither is it prudent to abandon a “trial, observation, and refine” cycle entirely, Alex Tyrrell, head of advanced technologies at Wolters Kluwer and CTO at Wolters Kluwer Health, said.

Instead, Tyrrell argues for refining the iteration process itself to detect issues earlier in a safe setting, particularly in regulated, high-trust environments like healthcare.

He recommends pairing each iteration with both predictive and diagnostic signals, so IT teams can intervene before the error ripples down to the customer level.

Beyond technical challenges

Mark Baker, chief AI practitioner at Altimetrik, cautioned that proactive iteration refinement only addresses part of the problem. Technical issues like hallucinations, latency, and model drift are real, Baker said, but “they’re not the only reason pilots die — and they’re not even the majority of reasons.”

AI pilots fail for the same non-technical reasons that have always plagued technology performance, such as a governance vacuum, organizational unreadiness, low usage rates, or “measurement theater,” which is when tech performance can’t be tied to a specific business value, explained Baker.

“But the AI moment — when the pressure to move quickly is combined with unprecedented user leverage — makes everything more acute,” Baker said.

Another issue is that a pilot that generates valuable insight but no immediate ROI is often dismissed as a failure, Amit Basu, CIO and CISO at International Seaways, an owner and operator of crude, product, and chemical tankers around the world, said.

“This discourages risk-taking and leads teams to optimize for appearances rather than outcomes,” he said. “The real issue is that most KPIs measure output, not learning. In modern technology programs, learning speed is often more important than delivery speed.”

Even so, certain modern realities, from business as usual to standard CYA practice, often thwart efforts at refining or redesigning KPI templates, according to David Tyler, CEO of Outlier Technology Limited.

“Templated KPIs and widely adopted frameworks can quickly become a form of organizational religion. They offer certainty and safety, and, crucially, someone else to point at when things go wrong. ‘We followed the framework’ becomes a shield against accountability, even when judgment should have overridden process,” Tyler said.

‘Design for Failure’ vs. ‘Fail Fast’

Collecting metrics is a key part of assessing AI technology performance, but what you do with the measurements once you have them is another matter entirely. Typically, it boils down to a choice between “Design for Failure” and “Fail Fast” approaches.

“Fail Fast prioritizes speed and learning via quick experimentation. It’s powerful in early testing but risky if carried unchanged into scaled environments,” explained Beth Weeks, EVP of development at Planview, a global enterprise software company.

On the other hand, “Design for Failure approaches are slower initially but build resilience, observability, and recovery into systems upfront, making it generally more sustainable,” Weeks said.

The question is whether either approach still works well for CIOs today.

Design for Failure is about anticipating breakdowns and building containment up front: scenario testing, controls, fallbacks, and auditability, according to Manos. The challenge is accurately anticipating how AI fails before it happens: “That makes designing for every scenario inherently incomplete and sometimes overly prescriptive.”

Fail Fast is about “moving through learning loops quickly to discover what you didn’t know,” Manos said, including surprising failure modes and unexpected outputs that create new insight and better criteria for the build. The risk is failing fast in operationally critical areas; CIOs still need guardrails, so failures are caught early and don’t become customer-facing or regulatory events, warned Manos.

“In practice, the best approach is controlled fail fast: iterate quickly, but within a trusted data boundary and monitored environment that can detect unintended results and course-correct immediately,” Manos said.
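For teams that want to see what a controlled fail-fast guardrail might look like in code, the following is a minimal sketch rather than anything Manos describes. All names here (`controlled_call`, `is_acceptable`, `failures`) are hypothetical. The idea is that an experimental path runs first, a check decides whether its output stays inside the trusted boundary, and anything that fails is logged and routed to a known-good fallback before it reaches a customer.

```python
from typing import Any, Callable, List


def controlled_call(
    experimental: Callable[[Any], Any],
    fallback: Callable[[Any], Any],
    is_acceptable: Callable[[Any], bool],
    failures: List[str],
    request: Any,
) -> Any:
    """Try the experimental path; fall back and record the failure if it misbehaves.

    All parameter names are illustrative assumptions, not a real framework's API.
    """
    try:
        result = experimental(request)
    except Exception as exc:
        # The experiment crashed: record it for later review, serve the safe path.
        failures.append(f"error: {exc}")
        return fallback(request)

    if not is_acceptable(result):
        # The experiment ran but produced output outside the trusted boundary.
        failures.append(f"rejected: {result!r}")
        return fallback(request)

    return result
```

The `failures` list stands in for whatever monitoring a team already uses. The point of the sketch is only that every failed experiment leaves a record and never becomes the customer-facing answer.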

Methodologies

Smart CIOs now combine experimentation with instrumentation, according to Basu. “Instead of waiting for a pilot to fail, they design systems to surface early signals: performance drift, adoption friction, security weaknesses, or integration risk,” he said.

Basu noted three practices he finds to be especially effective:

- Design for observability. Build pilots with deep telemetry from day one so failures are visible early, not after rollout.
- Conduct pre-mortems and scenario testing. Before launching, ask “How could this fail?” and simulate those paths. This often prevents the failure entirely.
- Deploy AI-assisted diagnostics. Use analytics and AI to detect anomalies in workflows, usage patterns, and system behavior before they become outages or abandoned projects.

“The goal is not to avoid failure, but to shorten the distance between signal and correction,” Basu said.
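One way to picture shortening the distance between signal and correction is a rolling check that runs on every new measurement instead of once a quarter. The sketch below is an illustrative assumption, not Basu's method: the class name `DriftMonitor`, the baseline, the tolerance, and the window size are all hypothetical and would need tuning for a real pilot.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flag drift when the rolling mean of a metric drops too far below a baseline.

    Hypothetical helper for illustration; thresholds are assumptions.
    """

    def __init__(self, baseline: float, tolerance: float, window: int) -> None:
        self.baseline = baseline
        self.tolerance = tolerance
        # Only the most recent `window` observations are kept.
        self.values = deque(maxlen=window)

    def observe(self, value: float) -> bool:
        """Record one measurement and return True if drift is detected."""
        self.values.append(value)
        if len(self.values) < self.values.maxlen:
            # Not enough data yet to judge.
            return False
        return self.baseline - mean(self.values) > self.tolerance


monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=3)
for score in [0.90, 0.90, 0.90, 0.80, 0.80]:
    if monitor.observe(score):
        print(f"drift detected at score {score}")
```

With these example numbers, the alert fires on the fifth reading, as soon as the three-reading average has slipped more than 0.05 below the baseline.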

Good vs. bad failures

Even so, failures still happen. A failure caused by design or speed would seem to be a bad thing that necessitates corrective action, but the issue isn’t that clear-cut: a good failure produces learning, according to Yuri Gubin, chief innovation officer at DataArt, a global software engineering and consulting firm. He describes this as a scenario where people had time to work, progress was made, materials were delivered, and there is transparency into what worked and what did not.

“Results are documented, discussed, and shared. Those learnings then influence future decisions and designs,” Gubin said.

By comparison, a bad failure is “when something ends and no one knows why,” he explained. In other words, there is no clarity on what went wrong, what succeeded, or what could be reused.

“If an experiment finishes and the organization has nothing to show for it in terms of insight or data, that is simply wasted effort. Failure itself is not the problem. Lack of learning is,” Gubin said.

Basu has a checklist that might also be helpful in making the distinction between good and bad failures:

A good failure has three characteristics:

- It happens early and cheaply.
- It produces clear learning.
- It improves the next decision.

A bad failure is notably different:

- It happens late, in production, or in front of customers.
- It repeats a known mistake.
- It damages trust, safety, or compliance.

“In simple terms: Good failures improve the system. Bad failures expose weaknesses the organization already should have addressed,” Basu said.

By whatever method they use, strong CIOs build governance that encourages good failures and “systematically eliminates bad ones through controls, simulation, and early warning systems,” Basu said.
