Like it or not, AI adoption is already underway. But the enterprise story isn’t about high-profile moonshots. It’s about AI capabilities being added to the systems companies already use.

Most enterprises are not building these AI tools from scratch. Instead, they turn to existing suppliers that are embedding AI into established platforms. Examples include:

- Security vendors adding AI to enhance real-time threat analysis.
- Enterprise applications adding scheduling optimization or natural language transcription.
- Inventory management and loss prevention becoming more predictive and automated.

AI, in short, is entering the enterprise through upgrades to familiar software and services — not entirely new systems.

As these capabilities spread, however, they place new demands on enterprise infrastructure. While individual AI capabilities may be simple to onboard, enterprise-wide adoption can quickly become complicated. Some AI functions require low latency for fast response times. Others must prioritize reliability to ensure no data are lost. Enterprises must align network performance with the specific requirements of each AI function.

Enterprise AI is already ubiquitous

Before addressing network requirements, it’s useful to identify how and where enterprises are investing in AI technologies. To that end, Omdia surveyed 733 large enterprise decision-makers worldwide, both independently and in partnership with HPE Juniper Networking, and conducted more than a dozen interviews with enterprise executives and service providers to understand how enterprise AI adoption is changing network needs.

As noted, most enterprises aren’t developing foundational AI models themselves. They’re relying on software vendors to integrate AI into the platforms that run their business.

Enterprise IT directors know that they must prove AI’s value to get buy-in from CIOs. As a result, their initial projects are pragmatic. IT departments keep close tabs on performance metrics: percentage efficiency gains, reduced worker-hours spent on tasks, euro or dollar savings, or revenue increases. Omdia’s research reveals that IT and operations, finance, and customer service are three of the initial landing points for enterprises investing in AI.

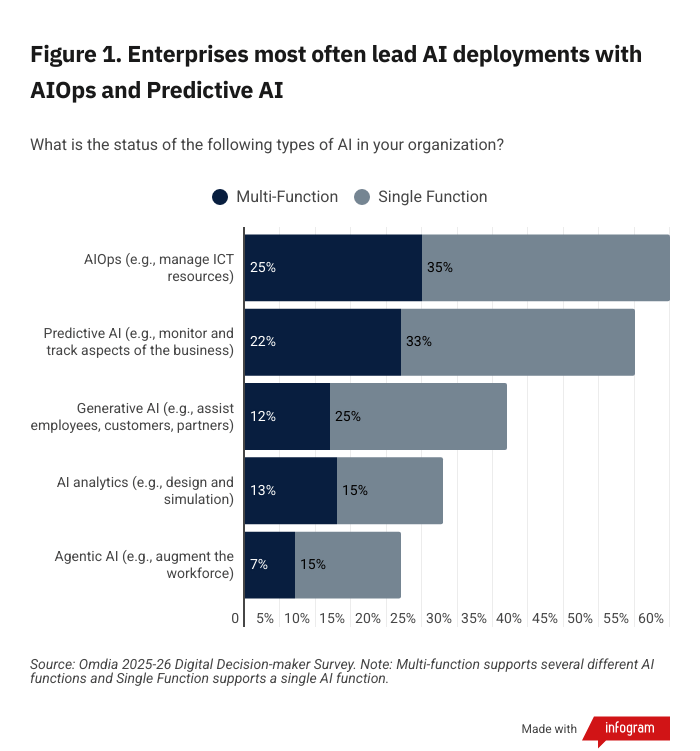

As shown in Figure 1, nearly 80% of large enterprises are active AI adopters today, meaning they have purchased or subscribed to, and frequently trained and customized, AI platforms and services. Even enterprises that don’t consider themselves active AI adopters use AI tools in some capacity. In fact, there’s no avoiding AI functionality embedded in SaaS sites, collaboration environments, retail commerce and search queries.

Networks must keep pace — at machine and human speed

Most AI traffic is generated by upgrades to conventional applications, not revolutionary new uses, and much of AI’s impact on enterprise network traffic has so far been below the waterline. Enterprises that actively deploy AI report low single-digit percentage changes in their network traffic volumes on average. But they expect AI traffic to surge, outpacing their total network traffic growth by 4.5x-5x on average over the next three years.

Network performance is essential when AI becomes part of real-time or mission-critical tasks. AIOps is often time sensitive: Security, network and IT control require real-time analysis and response. AIOps uses small, tightly focused models for fast decision-making. AI analytics and agentic AI need availability and delivery guarantees, to make sure information and instructions aren’t dropped.

However, when AI interacts with people, it needs to move at a human pace. In collaboration settings, for example, meeting transcripts and summaries have no time constraint. But intelligent filters, captions or translation must operate in near-real time during a live session.

In human-AI interactions, the expectation for a voice/video conversation is generous: a delay of 1-2 seconds is acceptable. Interactive applications are far less forgiving: latency above 50-100 ms breaks the experience.

The network is often a small piece of the time budget compared to AI processing lag. But network availability, delivery and latency can be managed. It should never be the reason why a transaction fails or a user experiences poor performance.
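One way to picture the time budget is a simple check of whether AI processing plus network delay fits within what a given interaction tolerates. The sketch below is illustrative only: the class and function names are hypothetical, the budgets are the upper ends of the ranges quoted above, and the processing and network figures in the example are placeholders rather than measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InteractionClass:
    """Hypothetical label for a type of human-AI interaction."""
    name: str
    budget_ms: float  # longest end-to-end delay users are assumed to tolerate


# Upper ends of the ranges quoted in the text; treat them as rough guides.
CONVERSATION = InteractionClass("voice/video conversation", 2000.0)
INTERACTIVE = InteractionClass("interactive application", 100.0)


def network_allowance_ms(kind: InteractionClass, processing_ms: float) -> float:
    """Delay left over for the network once AI processing is accounted for.

    A negative result means processing alone already exceeds the budget.
    """
    return kind.budget_ms - processing_ms


def fits_budget(kind: InteractionClass, processing_ms: float, network_ms: float) -> bool:
    """True if processing plus network delay stays within the budget."""
    return processing_ms + network_ms <= kind.budget_ms


if __name__ == "__main__":
    # Placeholder figures for illustration only.
    processing_ms, network_ms = 800.0, 40.0
    for kind in (CONVERSATION, INTERACTIVE):
        verdict = "fits" if fits_budget(kind, processing_ms, network_ms) else "exceeds"
        print(f"{kind.name}: {verdict} the {kind.budget_ms:.0f} ms budget")
```

With these placeholder numbers, the same 800 ms of processing leaves ample room in a conversational budget but exceeds an interactive one before the network adds anything, which is why the network share has to be kept small and predictable.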

Networks need to adapt to widespread training and customization

Beyond tailoring AI deployments to network performance requirements, enterprises need AI customization specific to their industry. For example, aerospace components, automobile parts and collectible toy manufacturers each use entirely different machines, fabrication processes and supply chains. Each will have different terminology around its requirements and goals. Each might start with the same GenAI model but need a thin layer of customization.

It’s easier to build on top of pre-existing AI models, often provided by known suppliers, than to start from scratch. AI providers offer different levels of privacy options to wall off customers’ proprietary information. Customized AI models need to upload and ingest extra training data. Enterprises that train AI estimate that this takes several hundred gigabytes of uploaded data on average.

Customization of AI models becomes more complex for enterprises operating globally, across multiple networks. Multinational enterprises can’t cover the world with just one AI instance: even if governance and compliance don’t come into play, backhauling network traffic around the world tanks performance. Instead, enterprises load instances of their custom-trained AI model across countries and regions. Then they need to keep those AI models synchronized. They can do this through distributed inferencing, which educates AI instances on common datasets. Enterprises that use distributed inferencing estimate that this represents hundreds more gigabytes of data uploaded each year.

And there’s even more: Enterprises need assurances that their AI models stay on mission. That calls for regular training refreshers. Enterprises on average re-train their models twice a year. On average, this can add hundreds more gigabytes of uploaded data each year.

Total network traffic generated by enterprise AI training and inferencing remains small. But it is poised for explosive growth, predicted to more than double each year (140% CAGR) for the foreseeable future. Enterprises are implementing more customized AI models, they are growing the amount of functionality and sophistication for each model, and they are increasing the number of instances they run. These factors collectively multiply the traffic load, and enterprises add interconnect capacity for faster, more reliable AI training, retraining, and distributed inferencing. Through 2030, Omdia forecasts more than 50x growth in AI operations and management traffic, and another 20x growth in the five years to 2035, rocketing from a tiny base to a measurable amount of total global traffic as AI becomes more connected.
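To see how these growth figures compound, a small calculation helps. The sketch below is a rough illustration with hypothetical function names. The source does not state a base year for the 2030 forecast, so the five-year horizon used here is an assumption, as is comparing the 140% CAGR with the 50x and 20x multiples, which may describe different slices of AI traffic.

```python
def growth_multiple(cagr: float, years: float) -> float:
    """Total growth factor after compounding `cagr` for `years` years.

    `cagr` is a fraction, so 1.40 means 140% growth per year.
    """
    return (1.0 + cagr) ** years


def implied_cagr(multiple: float, years: float) -> float:
    """Annual growth rate (as a fraction) that yields `multiple` over `years` years."""
    return multiple ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Assumed five-year horizons; the source does not give a base year.
    print(f"140% CAGR for 1 year:  {growth_multiple(1.40, 1):.1f}x")
    print(f"140% CAGR for 5 years: {growth_multiple(1.40, 5):.1f}x")
    print(f"50x over 5 years implies {implied_cagr(50, 5):.0%} per year")
    print(f"20x over 5 years implies {implied_cagr(20, 5):.0%} per year")
```

A 140% CAGR multiplies traffic by 2.4 each year, which is what "more than double" means here. Held for five years, that rate compounds to roughly 80x. A 20x increase over five years corresponds to about 82% annual growth, so the later period is forecast to grow more slowly in percentage terms, though from a much larger base.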

Once again, video changes everything

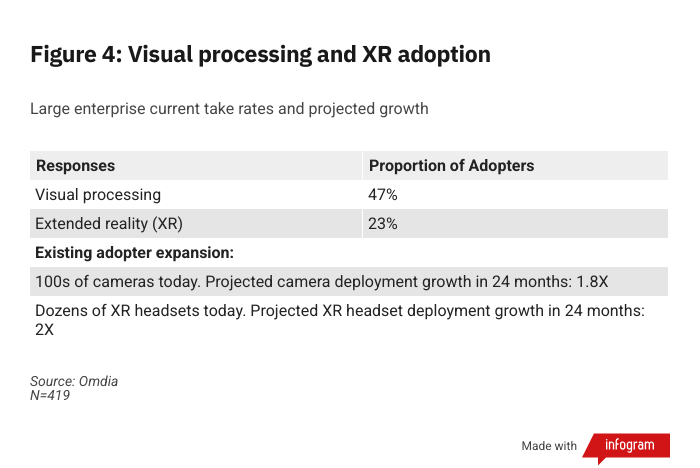

Our present-day pragmatic AI operates in virtual space. However, there is an exciting long-term future for immersive technologies and physical AI, and networks will need to evolve to support this AI transformation. For now, cameras bridge virtual space and physical AI. Today, about half (47%) of large enterprises use dedicated cameras and imaging devices with visual processing and cognitive analytics, according to Omdia’s 2025-26 Digital Decision-maker Survey. A typical large enterprise implementation has hundreds of cameras. They are cheap and ubiquitous, easy to set up, and versatile in what they can monitor and analyze.

AI visual processing makes object recognition easy. Add basic service logic and a controller, and there are endless opportunities for machine eyes to be trained for smart tasks: guarding building entrances, tracking store shelves, taking stock in equipment rooms, overseeing assembly lines and shop floors, monitoring highway safety, and securing conference centers and transport hubs.

Old-school computer vision ran on site. Pre-processing on device and on site still makes sense, but there are reasons why AI processing in the cloud is better:

- A vast library of objects and conditions. Hosted AI training is fast and cheap compared to conventional applications development.
- Flexibility to add and adjust assigned tasks. AI may be taught to count widgets at first, then to recognize damaged widgets, then to detect environmental hazards, and later to correlate multiple video feeds for more complex analysis.
- Aggregated analytics. Visual data can be collected and stored for trending analysis across views and locations to unlock insights and value.
- Shared model learning. Inferencing across a large audience improves accuracy, efficiency, and richness of results over time.

AI for cameras has a massive impact on network traffic. A single moderate-resolution (500 kB) image is equivalent in file size to more than 75,000 words of text, or more than 750 average chat queries. If one high-volume industrial camera takes one image per second for a year, it generates nearly 16 TB of image data annually.
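The arithmetic behind the camera figures can be checked in a few lines. This is a minimal sketch assuming decimal units (1 kB = 1,000 bytes, 1 TB = 10^12 bytes) and a 365-day year; the function names are hypothetical, and the bytes-per-word and words-per-query values are simply what the quoted equivalences imply, not figures stated in the source.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000 in a non-leap year
IMAGE_BYTES = 500_000                  # 500 kB image, decimal kilobytes assumed


def annual_terabytes(image_bytes: int, images_per_second: float = 1.0) -> float:
    """Image data produced by one camera in a year, in decimal terabytes."""
    return image_bytes * images_per_second * SECONDS_PER_YEAR / 1e12


def implied_bytes_per_word(image_bytes: int, words: int) -> float:
    """Average text bytes per word implied by an image-to-words equivalence."""
    return image_bytes / words


def implied_words_per_query(words: int, queries: int) -> float:
    """Average chat query length implied by a words-to-queries equivalence."""
    return words / queries


if __name__ == "__main__":
    print(f"{annual_terabytes(IMAGE_BYTES):.2f} TB per camera per year")
    print(f"{implied_bytes_per_word(IMAGE_BYTES, 75_000):.1f} bytes per word")
    print(f"{implied_words_per_query(75_000, 750):.0f} words per query")
```

Under these assumptions, one camera produces about 15.8 TB a year, consistent with the "nearly 16 TB" figure, and the equivalences imply roughly 6.7 bytes per word and 100 words per chat query. Changing `images_per_second` or the image size shows how quickly the total scales across a deployment with hundreds of cameras.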

As with other AI functionality, some camera-driven functions will be time-insensitive (e.g., warehouse inventory stock); some will need to operate at the pace of satisfactory human experiences (e.g., physical surveillance, biometrics); and some will need to respond in real time (e.g., alerts on a manufacturing floor).

For emerging XR applications, immersion demands imperceptible (sub-50ms) latency to provide satisfactory experiences. Here, too, local device/server pre-processing will need to mix with processing in the cloud. Omdia forecasts that over the next two years, adoption of AI for cameras and use of XR headsets will increase about two-fold.

Conclusion: What’s it all mean for the enterprise?

For enterprises, scaling the use of AI within their organizations, in both virtual and physical space, will get complicated, fast. The average large enterprise AI adopter already has more than seven active AI functions, and that number is growing.

The management of front-end traffic (sites to AI) and back-end interconnect traffic (between AI instances) requires careful planning to ensure critical responses happen in real time, user experiences are satisfactory, and transactions are completed reliably.

High-visibility AI slop is a distraction. Expect quietly elegant AI uses to proliferate, and expect video input to become part of the equation. Over the next few years, more AI-ingested media, functionality, and agentic AI interactions are going to make managing network and infrastructure performance messier. But on the bright side, there will be AI to help manage those needs, too.