Web data scraping has grown in significance, as it enables companies to obtain current information for market research, competitor analysis, and other purposes. One of the most widely used programming languages to make this web scraping process easier is Python. Why, then, should your company use this language to retrieve web data? How does web scraping in Python operate? Explore the answers for your Python web scraping projects by reading on!

What is Web Scraping and Why Is It Useful?

Web scraping is simply pulling data from websites to your existing software. The manual copying and pasting of website content is eliminated by this automated process. Rather, it enables you to access web pages, comprehend their structure, and extract the desired information by writing code in Python and other programming languages.

So, why is web scraping helpful? To understand the importance of web scraping in today’s data-driven world, let’s take a glance at interesting statistics from Bright Data:

- 69% of startups now use public web data as a primary source to gather real-time data. Meanwhile, 69% of enterprises use this data for real-time AI applications.

- 54% of respondents see a positive financial impact from web scraping.

These numbers somehow reflect how important web data is to businesses in all sectors. But learning and digesting it ourselves is hard. But this process becomes much easier with web scraping tools. Accordingly, marketing and sales teams employ web scrapers to monitor product prices on e-commerce websites or mentions and reviews on social media. Meanwhile, recruiters gather job listings from around websites, and researchers may scrape massive amounts of web data for their research purposes.

With web scraping, your business can save time and remove repetitive manual work in collecting web data. When used ethically and responsibly, this approach empowers your decision-making, increases competitive edge, and boosts innovation.

Why Use Python for Web Scraping?

Python is the top choice to extract web data, with 69.6%, as it combines simplicity with power. Below are several benefits Python offers to facilitate and accelerate the entire web scraping workflow:

Popular Libraries

Python’s ecosystem of well-maintained libraries helps simplify web data extraction.

- BeautifulSoup enables developers to parse HTML and XML documents and fetch specific elements (e.g., titles, links, or tables).

- Selenium is often used for JavaScript-based websites. By simulating real browser interactions, the tool can scrape interactive or dynamic content like humans do.

- Scrapy is a full-fledged framework for large-scale extraction. It has built-in tools to process requests, follow links, store data, and monitor concurrent crawls.

- Requests facilitate the process of sending HTTP requests and receiving responses from websites without handling complex networking code.

Advantages of Python’s Ecosystem

In addition to those libraries, other Python features enable you to effectively scrape the web. In particular, Matplotlib or Seaborn aid in turning the data into a comprehensible format after scraping, while pandas or NumPy aid in cleaning and processing the raw data. To further utilize the data gathered to inform AI models, Python also supports scikit-learn and TensorFlow, two machine learning libraries. Python makes it possible for even novices to learn about web scraping thanks to its vast tutorial library and developer community.

Scalability & Flexibility

Doesn’t really matter if you’re just poking around for a few headlines or trying to snag mountains of data—Python’s got your back. Seriously, slap together a few lines with BeautifulSoup and Requests, and boom, you’re in. Need advanced capabilities for more complex scraping tasks? Scrapy can handle all the messy stuff by juggling tons of requests at once, yanking data from all over, and even playing nice with cloud setups. And if you suddenly decide you want images, text, or whatever, Python’s got the tools for that too. Honestly, it’s kinda wild how far you can push it, no matter what you’re scraping or why.

Setting Up Your Environment

This chapter gets your mindset and your environment ready for working in Python to scrape data from the web. You need to install Python, along with the Python libraries you want, and an Integrated Development Environment (IDE). Running your code smoothly depends on your setup, so don’t miss this important step.

Install Python & pip

First things first, do you have Python 3 on your machine? Pop open your terminal and just type to check this:

python –version

If your computer says it’s not found, time to hit up python.org and snag the latest version. Don’t worry, the newer Python comes with pip baked in, so you don’t need to install this package manager separately.

Now, spin up a virtual environment. This helps you keep a cluttered mess of dependencies organized and lets them not be involved in other parts of your systems. Just run this in your terminal to run such a sandbox:

python -m venv scraping-envThen, activate the environment with the following commands:

For macOS/Linux:

source scraping-env/bin/activateFor Windows:

source scraping-env\Scripts\activateOnce the environment is activated, any libraries you install with pip will operate within this sandbox. This keeps your setup clean and conflict-free.

Install Required Libraries

Now that you have installed Python, install the libraries for web scraping with pip:

pip install requests pip install beautifulsoup4 pip install lxml pip install selenium pip install mechanicalsoup- requests – Send HTTP requests

- beautifulsoup4 – Parses and retrieves HTTP elements

- lxml – Executes high-performance parsing and XPath queries

- selenium – Scrapes dynamic websites that contain JavaScript-based content

- mechanicalsoup – Automates logins and form interactions

You don’t need to install all these libraries. Only choose what you prefer using. If you’re a web scraping newcomer, choose BeautifulSoup4 and Requests because they’re lightweight, easy to learn, and cover the most basic scraping needs.

Choose Your IDE or Notebook

Next, select a comfortable coding environment for your Python web scraping project. There are many choices, typically VS Code, PyCharm, and Jupyter Notebook. Each comes with its strength. But we advise you to choose Jupyter Notebook if you want to get used to Python web scraping. This environment allows beginners to run code step by step and instantly see the output. It’s great to test how to retrieve specific elements and experiment with your scraping logic.

When you’re ready to write full scripts or build a larger project, move to VS Code and PyCharm. VS Code is lightweight and flexible. It has extensions to help you debug and manage virtual environments easily. Meanwhile, PyCharm comes with strong project management, built-in debugging, and database integration. It works best for more complex scraping.

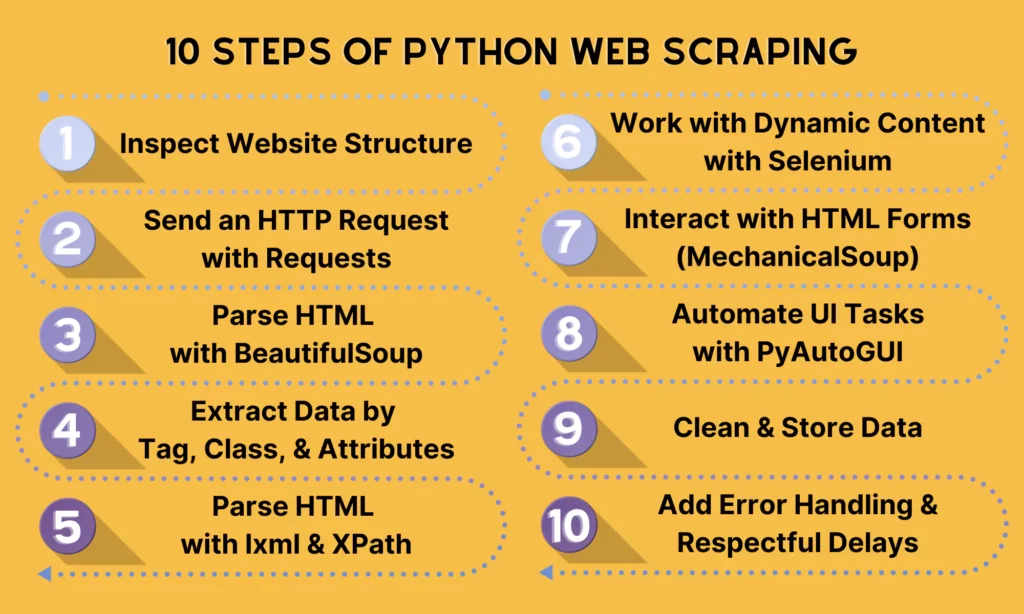

Step-by-Step Python Web Scraping Tutorial

Now that you have prepared an ideal development environment, let’s move on to a typical workflow of scraping a website with Python.

Step 1. Inspect the Website Structure

Before writing Python code, we need to inspect the website structure first by using Developer Tools. Just right-click the link and hit “Inspect” (on Chrome or Firefox—doesn’t really matter which). This pops open all the messy HTML code. Then, you can start poking around for stuff like <h1>, <p>, <div>, or <span>. Pay attention to things like class or id — it’s like the site’s way of tagging stuff for you.

Here are the usual suspects you’ll run into:

<h1>: This one’s the big headline like, “Welcome to My Blog!” kind of thing.<h2>through<h6>are just smaller headings. Think of them as subtitles or section headers.<p>: That’s your bread and butter for text—paragraphs, article bodies, all the wordy stuff.<a>: Links. Obvious but important.<div>: Basically a catch-all box. Sites use these everywhere to organize things, even if it looks like it doesn’t do anything.<span>: Smaller than a div, more like a sticky note for tiny bits—dates, prices, labels, whatever.<img>: Images, no surprise. It often comes with thesrcattribute to pinpoint the actual sources of the images.

Beyond these tags, you can note several key attributes as follows:

- class groups elements together. For instance, various headlines may share the same

class=”headline”. - id identifies one specific element on the page, such as

id=”main-title”. - href appears in

<a>tags to contain the URL. - src appears in

<img>or<script>tags to show the file location. - alt is the text description for images. This attribute is useful for accessibility and scraping image metadata.

In the example below, we extract the web data from the blog post, “What is an AI agent?” in LangChain’s blogs.

Step 2. Send an HTTP Request with Requests

Next, you’ll leverage the requests library to extract the raw HTML of the page. The following command helps you retrieve the page’s source code for later parsing.

import requestsurl = "https://blog.langchain.com/what-is-an-agent/"response = requests.get(url)requests.get(url)sends an HTTP GET request to the LangChain blog page.response.textreturns the HTML source code of the page as a string.[:500]prints the first 500 characters, so you don’t flood your terminal.

Tip: The server replies with the page content and a status code when the request.get() function sends an HTTP request to the webpage. You can safely work with the content if the status code is 200, which indicates that the request was successful. However, it indicates that something went wrong if the server returns 404 (Not Found) or 500 (Server Error). To check the status code, you can add the following command to the HTTP request:

if response.status_code == 200: print(response.text[:500]) # print the first 500 characters of the pageelse: print(f"Request failed with status code: {response.status_code}")Here’s our complete snippet in this step:

import requestsurl = "https://blog.langchain.com/what-is-an-agent/"response = requests.get(url)if response.status_code == 200: print(response.text[:500]) # print the first 500 characters of the pageelse: print(f"Request failed with status code: {response.status_code}")Step 3. Parse HTML with BeautifulSoup

Now that you extracted the raw HTML, it’s time to parse it with BeautifulSoup to fetch elements.

Here’s an example:

from bs4 import BeautifulSoupsoup = BeautifulSoup(response.text, "html.parser")print(soup.prettify())The .prettify() function here helps you print the entire HTML document in a nice format (with indentation). In case you just want to extract a specific element (e.g., title or headings), you can replace print(soup.prettify()) with the following commands:

To extract the title:

title = soup.title.stringprint("Page Title:", title)In order to extract the main heading:

heading = soup.find("h1").get_text()print("Main Heading:", heading)To extract all links:

for link in soup.find_all("a"): print(link.get("href"))Step 4. Extract Data by Tag, Class, and Attributes

You don’t have to hang onto the whole page once you’ve torn through the HTML. That’s just digital hoarding. Most sites flag the good stuff with tags and CSS classes anyway, so why not just grab exactly what you need? BeautifulSoup is your friend for this. Just tell it what tags or classes you’re after, and it’ll fetch the goods.

Like, in that LangChain blog post example, the content of the article lives inside a <div> with the class article-content. If you wanna snatch all the paragraphs from there, just use a simple command with BeautifulSoup to scoop up every <p> tag inside that section:

content_div = soup.find("div", class_="article-content")if content_div: for para in content_div.find_all("p"): print(para.get_text(strip=True))else: print("No article content found.")Step 5. Parse HTML with lxml and XPath

BeautifulSoup is ideal for simple scraping tasks, like searching for elements by IDs, tag names, or classes. But when the HTML structure is challenging or when you require complex queries and more precision, parse HTML with lxml and XPath.

Below is an example of finding prices with lxml and XPath:

from lxml import htmltree = html.fromstring(response.text)prices = tree.xpath('//span[@class="price"]/text()')print(prices)Step 6. Work with Dynamic Content using Selenium

Some websites load content with JavaScript. In this case, using requests alone won’t work for such dynamic content. That’s why you need to leverage Selenium, which simulates a real browser and allows you to access websites like humans do. Below are two applications of Selenium in extracting dynamic content:

Example: Implement a Google search

from selenium import webdriverfrom selenium.webdriver.common.keys import Keysdriver = webdriver.Firefox()driver.get("https://www.google.com")box = driver.find_element("name", "q")box.send_keys("Python web scraping" + Keys.RETURN)print(driver.title)driver.quit()Example: Scrape product details

Selenium can wait for elements to load and retrieve their text. This capability makes it helpful in e-commerce or test sites.

from selenium import webdriverfrom selenium.webdriver.common.by import Byfrom selenium.webdriver.chrome.service import Servicefrom selenium.webdriver.chrome.options import Optionsimport time# --- Setup Selenium ---chrome_options = Options()chrome_options.add_argument("--headless") # Run browser in backgroundchrome_options.add_argument("--disable-gpu")chrome_options.add_argument("--no-sandbox")service = Service("/path/to/chromedriver") # Change path to your chromedriverdriver = webdriver.Chrome(service=service, options=chrome_options)# --- Load Page ---url = "https://example-ecommerce.com/products" # Replace with a real e-commerce pagedriver.get(url)# Wait for JavaScript to load productstime.sleep(5) # --- Scrape Product Details ---products = driver.find_elements(By.CSS_SELECTOR, ".product-card") # Example selectorfor product in products: try: name = product.find_element(By.CSS_SELECTOR, ".product-title").text except: name = "N/A" try: price = product.find_element(By.CSS_SELECTOR, ".product-price").text except: price = "N/A" try: link = product.find_element(By.TAG_NAME, "a").get_attribute("href") except: link = "N/A" print(f"Name: {name}, Price: {price}, Link: {link}")# --- Quit ---driver.quit()webdriver.Chromehelps launch a Chrome browser.headlessruns Chrome invisibly (without GUI).driver.get(url)helps load the page.time.sleep(5)enables JS to finish loading (or you can use Selenium’sWebDriverWaitfor smarter waits).find_elements(By.CSS_SELECTOR, “.product-card”finds all product cards.

Step 7. Interact with HTML Forms (MechanicalSoup)

MechanicalSoup works best in filling and submitting forms. Let’s take a look at how it works through the following example:

import mechanicalsoupbrowser = mechanicalsoup.StatefulBrowser()browser.open("https://example.com/login")browser.select_form("form")browser["username"] = "myuser"browser["password"] = "mypassword"browser.submit_selected()Step 8. Automate UI Tasks with PyAutoGUI (optional)

In special cases, you may leverage PyAutoGUI to control mouse and keyboard actions. In other words, it allows you to scroll and click when scraping content that requires user-like behavior.

import pyautoguipyautogui.scroll(-500) # scroll downStep 9. Clean and Store Data

Once you have extracted the desired data, save it in a structured format by exporting it to CSV, JSON, or a database (such as SQLite or PostgreSQL). For example:

import csvwith open("data.csv", "w", newline="") as f: writer = csv.writer(f) writer.writerow(["Name", "Price"]) writer.writerow(["Laptop A", "$1000"])Step 10. Add Error Handling & Respectful Delays

You can add error handling and delays to avoid overwhelming servers. Here’s an example:

import timeimport randomtry: response = requests.get(url, timeout=10) response.raise_for_status() time.sleep(random.uniform(1, 3)) # random delayexcept requests.exceptions.RequestException as e: print("Error:", e)Practical Web Scraping Examples

Let’s be real, web scraping is a seriously powerful tool with tons of uses in the real world. To give you an idea, here are three practical examples that show just how valuable it can be for businesses to gather useful data.

Scraping news from websites

Ever wished you could see all the latest headlines in one spot? News scraping lets you do just that, so you don’t have to jump between a bunch of different sites. This means you can easily build your own personalized news aggregator, track stories from multiple sources, and even save articles for later analysis—like figuring out which keywords pop up most or gauging public sentiment.

For job seekers, trying to centralize all those different listings can be a major headache. Web scraping makes it a breeze to identify the best career opportunities. You can easily pull job titles, descriptions, company names, and locations from trusted platforms like Indeed or LinkedIn. This helps you create your own job-tracking dashboard, compare salary ranges across companies, and even get automatic alerts when new roles that fit your criteria pop up.

Tracking product prices on e-commerce sites

Trying to manually track product information—like prices, stock, and discounts—on e-commerce sites is a total nightmare. Why? Because they’re always changing! That’s where Python web scraping comes in, helping sellers and buyers stay competitive. For example, you can scrape different online stores to keep track of prices, so you’ll always know what’s happening in the market.

Common Challenges & How to Solve Them

Web scraping is powerful, but it does mean it has no limitations. Below are several common challenges you may face when extracting web data with Python or any programming language (like JavaScript or TypeScript), along with how to solve them:

Websites changing HTML structure

Websites often update or redesign their HTML templates. Minor changes in class names, wrappers, or nesting can make selectors like div.article-content or span.price outdated and lead to failures in automated extraction tasks.

Solutions: To solve this problem, you can:

- Use APIs when available, as APIs are versioned or more stable than HTML layouts.

- Write flexible selectors by using patterns like XPath with partial matches (e.g.,

//*[contains(@class, 'content')]) to tolerate minor changes. - Monitor and maintain by periodically testing your scraper and updating selectors when failures begin to surface.

- Use advanced tools like XTreePath, which can measure context similarity in the HTML tree to adapt to structural changes.

Rate limiting and IP bans

When websites detect abnormally high-frequency requests from a single IP, they can block access. This is because these sites often set rate limits or use firewalls to reject access once thresholds are exceeded. So, bad headers (like default Python User-Agents) and repetitive traffic patterns can easily lead to detection and blocking.

Solutions: To solve this challenge, you can:

- Add randomized delays between requests to simulate human browsing

- Rotate User-Agent headers (and perhaps other headers like Referer) to appear as different browsers or devices.

- Use IP rotation via proxies. Typically, proxy pools, especially rotating IPs, distribute requests and minimize detection risks, while residential proxies resemble real user traffic and have lower ban rates than datacenter proxies.

- Rotate cookies or sessions and simulate human-like browsing by maintaining session state across navigation.

Managing large-scale data scraping

When scaling up web scraping, you can face various challenges beyond detection, like performance, data management, and reliability. This is because scraping an increasing volume of pages from one machine can increase block risk and reliability breakdowns.

Solutions: To resolve this challenge, you can:

- Distribute scraping tasks across multiple servers or cloud functions to reduce load and per-IP risk.

- Use scraping APIs or pipeline services like Scraper API to manage proxies, process retries, and offer structured outputs. This will simplify scaling.

- Cache unchanged pages to avoid repeated downloads, and use

ETagorLast-Modifiedheaders to track updates. - Monitor scraping health by tracking success/failure rates, latency, and IP bans.

- Only use Selenium or Playwright on JavaScript-heavy pages; if not, using lightweight tools for speed and efficiency is enough.

Somebody essentially lend a hand to make significantly articles Id state That is the very first time I frequented your website page and up to now I surprised with the research you made to make this actual submit amazing Wonderful task

Your blog is a breath of fresh air in the crowded online space. I appreciate the unique perspective you bring to every topic you cover. Keep up the fantastic work!

Every time I visit your website, I’m greeted with thought-provoking content and impeccable writing. You truly have a gift for articulating complex ideas in a clear and engaging manner.

Your blog is a constant source of inspiration for me. Your passion for your subject matter shines through in every post, and it’s clear that you genuinely care about making a positive impact on your readers.